The Agentic Platform Model Versioning Reckoning of 2026: Why Backend Engineers Must Build Per-Tenant LLM Version Pinning and Drift Detection Pipelines Now

Something quietly broke in production last quarter, and most engineering teams never saw it coming. No deployment went out. No configuration changed. No engineer touched the stack. And yet, dozens of enterprise customers started filing support tickets complaining that their AI-powered workflows were producing subtly different outputs, making different decisions, and in some cases, behaving in ways that directly contradicted months of carefully tuned prompt engineering. The culprit? An upstream model update that no one announced, no one versioned, and no one built a detection pipeline for.

Welcome to the Model Versioning Reckoning of 2026. If you are a backend engineer working on any platform that exposes agentic AI capabilities to enterprise customers, this is the most important infrastructure problem on your roadmap right now. And the clock is already ticking.

The Agentic Platform Explosion Changed the Stakes Permanently

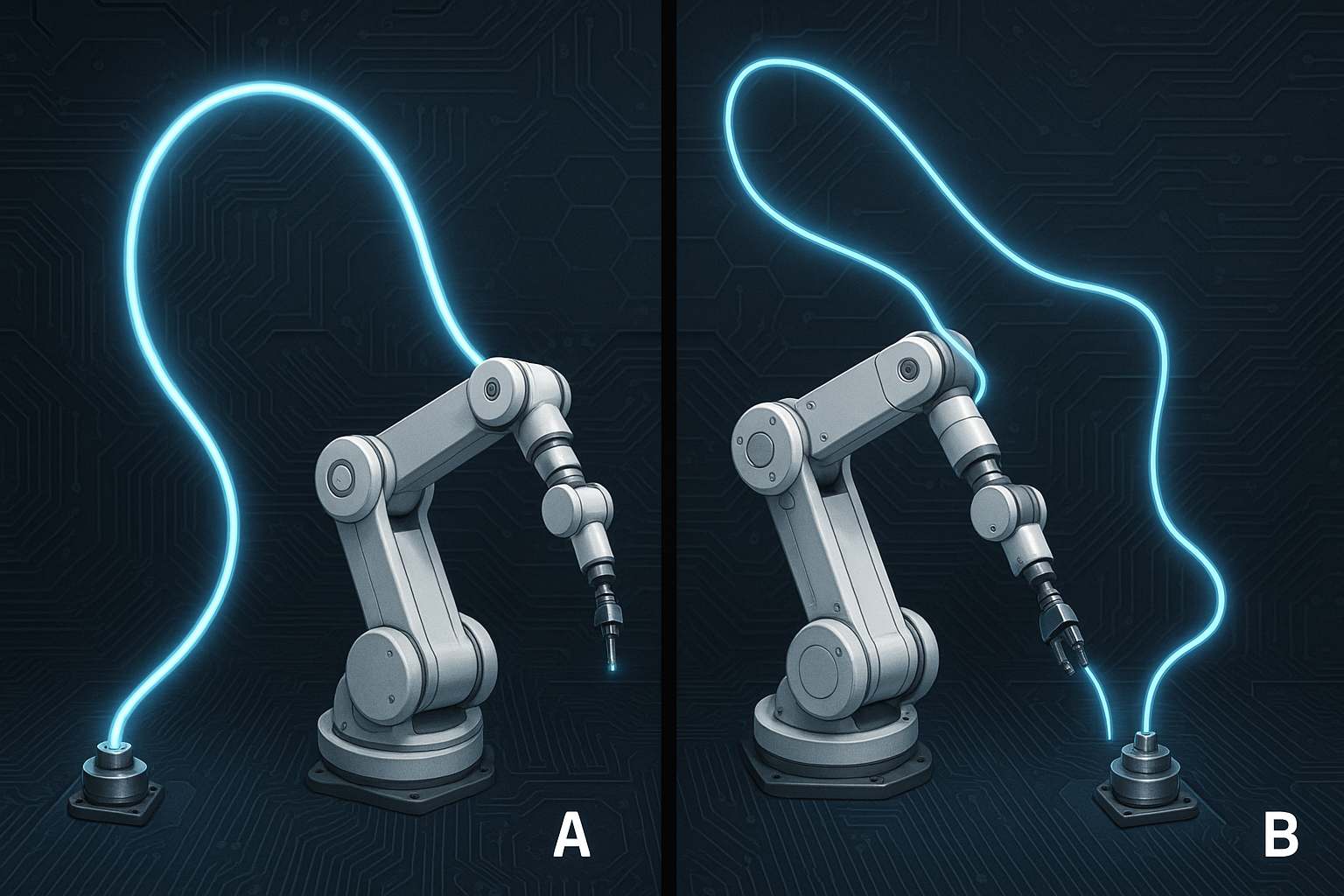

For most of 2024 and 2025, the dominant AI integration pattern was relatively forgiving: a user types a prompt, a model returns a response, a human reads it and decides what to do next. Behavioral drift in that loop was annoying but manageable. A slightly different tone or a subtly reworded answer rarely broke anything downstream.

That era is functionally over. In 2026, the dominant integration pattern is agentic. Autonomous agents are now embedded inside enterprise workflows that span CRM updates, financial reconciliation, legal document review, customer escalation routing, and supply chain decision-making. These agents do not wait for a human to review each output before acting. They call tools, write to databases, trigger downstream APIs, and hand off to other agents in multi-agent pipelines.

In this environment, a model that interprets an instruction differently in even 3% of cases is not a nuisance. It is a production incident. And the terrifying part is that most platforms are completely blind to when it happens.

The Silent Update Problem: What Is Actually Happening

Major model providers, including both frontier API vendors and open-weight model hosts, regularly update the models sitting behind stable-looking endpoint aliases. A call to an alias such as gpt-4o or claude-3-5-sonnet, or to a self-hosted quantized Llama variant, can silently resolve to a different set of weights following a provider-side update, a fine-tune refresh, a safety layer adjustment, or an infrastructure migration.

The problem compounds across several dimensions:

- Prompt sensitivity amplification: Agentic systems use structured prompts with tool schemas, memory injections, and chain-of-thought scaffolding. Even minor shifts in how a model interprets JSON instructions or tool-call syntax can cascade into completely different agent behavior.

- Multi-tenant blast radius: On a SaaS agentic platform, a single upstream model change does not affect one customer. It affects every tenant simultaneously, often in different ways depending on their specific system prompts and tool configurations.

- Latent drift: Because many agentic workflows produce outputs that are only reviewed periodically or by exception, behavioral drift can persist for days or weeks before a human notices. By then, corrupted outputs may have propagated through downstream systems.

- No rollback primitive: Unlike a bad code deploy, you cannot simply roll back a model update you did not control. If you have no version snapshot and no behavioral baseline, you cannot even prove the model changed, let alone restore prior behavior.

Why This Is Specifically a Backend Engineering Problem

It is tempting to frame model versioning as a vendor responsibility or an MLOps concern. It is neither, at least not exclusively. The reality is that the platform layer sits between the model provider and the enterprise tenant, and that is exactly where the versioning contract must be enforced.

Backend engineers building agentic platforms are now responsible for a guarantee that most teams have not explicitly signed up for: that the behavioral characteristics of the AI system a tenant configured and validated last month are the same behavioral characteristics operating on their data today. This is not a product promise. It is an infrastructure commitment, and it requires deliberate engineering to uphold.

The good news is that this is a solvable systems problem. The bad news is that almost no platform has solved it yet.

The Four Pillars of a Production-Grade Version Pinning Architecture

1. Per-Tenant Model Version Manifests

Every tenant on your platform should have an explicit, stored model version manifest that records exactly which model identifier, provider, and (where available) checkpoint hash was active at the time they configured and validated their agent workflows. This manifest must be treated as a first-class configuration artifact, versioned in your database alongside system prompts, tool definitions, and memory configurations.

When a provider releases a new model version, your platform should not transparently upgrade existing tenants. New model versions should be available as opt-in migrations, with a validation gate that tenants or your platform's automated test suite must clear before the new version becomes the active runtime for that tenant's agents.

Concretely, your data model needs something like this:

- tenant_id: the account identifier

- agent_id: the specific agent configuration

- pinned_model_id: the exact versioned model endpoint, not an alias

- pinned_at: timestamp of when this version was locked

- behavioral_baseline_id: foreign key to a stored behavioral snapshot (more on this below)

- drift_alert_threshold: tenant-configurable sensitivity for drift detection alerts
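As a minimal sketch, those fields could be modeled as an immutable record in Python. The class name ModelVersionManifest, the resolve_model helper, and the choice of a 0 to 1 range for the threshold are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelVersionManifest:
    """One pinned model version for one tenant's agent configuration."""
    tenant_id: str
    agent_id: str
    pinned_model_id: str          # exact versioned identifier, never an alias
    pinned_at: datetime
    behavioral_baseline_id: str   # reference to the stored behavioral snapshot
    drift_alert_threshold: float  # assumed here to be a divergence score in 0..1

    def __post_init__(self) -> None:
        if not 0.0 <= self.drift_alert_threshold <= 1.0:
            raise ValueError("drift_alert_threshold must be between 0 and 1")


def resolve_model(manifests: dict, tenant_id: str, agent_id: str) -> str:
    """Return the pinned model for a tenant's agent; fail loudly if none exists."""
    try:
        return manifests[(tenant_id, agent_id)].pinned_model_id
    except KeyError:
        raise LookupError(f"no manifest for {tenant_id}/{agent_id}") from None
```

Failing loudly when no manifest exists is a deliberate choice in this sketch: silently falling back to a default alias would reintroduce the problem the manifest is meant to prevent.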

2. Behavioral Baseline Snapshotting

Version pinning alone is not sufficient if you cannot detect when a pinned version itself changes beneath you. Providers do not always bump version identifiers when they make incremental updates to safety layers, tokenizer behavior, or instruction-following fine-tunes. You need a behavioral fingerprint that is independent of the model identifier.

A behavioral baseline snapshot is a stored set of canonical prompt-response pairs, sampled from a tenant's actual production traffic (with appropriate anonymization and consent controls), that characterizes how the model behaves on that tenant's specific workload. This is not a generic benchmark. It is a workload-specific behavioral fingerprint.

Building this requires:

- A sampling pipeline that periodically captures representative production inputs, stratified by workflow type and agent step

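- A replay mechanism that re-runs those inputs against the current model with sampling parameters held fixed (for example, temperature set to 0 where the provider supports it), so that output differences can be attributed to the model rather than to the request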

- A semantic similarity layer (often a smaller, stable embedding model) that can detect meaningful behavioral differences beyond surface-level token variation

- A structured diff format that can express what changed (tone, instruction-following fidelity, tool-call argument formatting, refusal rate, etc.) in a way that is actionable for engineering and interpretable for customers
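The replay and structured diff steps in the list above might be sketched as follows. The BaselineSample type, the call_model callable, and the three diff fields are hypothetical names chosen for illustration; a real diff would cover more dimensions and would add semantic comparison on top of these structural checks.

```python
import json
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class BaselineSample:
    workflow: str   # stratum, such as workflow type or agent step
    prompt: str
    response: str   # output recorded when the tenant validated the agent


def is_valid_json(text: str) -> bool:
    """True if the text parses as JSON."""
    try:
        json.loads(text)
        return True
    except ValueError:
        return False


def replay_and_diff(samples: List[BaselineSample],
                    call_model: Callable[[str], str]) -> List[Dict]:
    """Re-run each baseline prompt and report per-sample structural changes."""
    diffs = []
    for sample in samples:
        current = call_model(sample.prompt)
        diffs.append({
            "workflow": sample.workflow,
            "exact_match": current == sample.response,
            "json_validity_changed":
                is_valid_json(current) != is_valid_json(sample.response),
            "length_delta": len(current) - len(sample.response),
        })
    return diffs
```

Passing the model call in as a function keeps the replay logic independent of any provider SDK and makes it straightforward to test with a stub.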

3. Continuous Drift Detection Pipelines

Baseline snapshots are only valuable if you are continuously comparing live behavior against them. This means running a drift detection pipeline on a scheduled cadence, ideally daily or more frequently for high-stakes enterprise tenants.

An effective drift detection pipeline in 2026 looks like this:

- Shadow replay jobs: Asynchronously replay a sample of recent production inputs against both the pinned model and any candidate updated model, storing both outputs without affecting live traffic

- Semantic divergence scoring: Use cosine similarity on embeddings, plus structured output schema validation, to score how far the new outputs deviate from the baseline distribution

- Behavioral dimension tracking: Go beyond raw similarity and track specific behavioral dimensions: refusal rate, average output length, tool-call accuracy, JSON schema compliance, and reasoning chain structure

- Tenant-scoped alerting: When divergence exceeds a configurable threshold for a given tenant's agent, fire an alert to your platform's incident system and optionally to the tenant's technical contact, before any migration happens

The key architectural principle here is that drift detection must be proactive, not reactive. You should know a model has drifted before a tenant's production workflow encounters the changed behavior, not after a support ticket arrives.
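A rough sketch of the scoring and alerting steps, assuming embeddings have already been computed by some stable embedding model and arrive as plain lists of floats. The function names and the refusal-marker heuristic are assumptions made for illustration; production refusal detection would need something more robust than substring matching.

```python
import math
from statistics import mean
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def divergence_score(baseline_vecs: List[Sequence[float]],
                     current_vecs: List[Sequence[float]]) -> float:
    """Mean of (1 - cosine similarity) over paired baseline/current embeddings."""
    return mean(1.0 - cosine_similarity(b, c)
                for b, c in zip(baseline_vecs, current_vecs))


# Hypothetical heuristic: phrases treated as signs of a refusal.
REFUSAL_MARKERS = ("i can't", "i cannot", "i'm unable")


def behavioral_dimensions(outputs: List[str]) -> Dict[str, float]:
    """Track simple behavioral dimensions alongside the raw similarity score."""
    refusals = sum(any(m in o.lower() for m in REFUSAL_MARKERS) for o in outputs)
    return {
        "refusal_rate": refusals / len(outputs),
        "avg_length": mean(len(o) for o in outputs),
    }


def should_alert(score: float, threshold: float) -> bool:
    """Fire a tenant-scoped alert when divergence exceeds the tenant's threshold."""
    return score > threshold
```

In use, the threshold passed to should_alert would come from the tenant's drift_alert_threshold, so that two tenants replaying against the same model can alert at different sensitivities.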

4. Controlled Migration Gates with Rollback Contracts

When a new model version is available and drift detection has characterized the delta, tenants need a structured migration path rather than a surprise upgrade. This is where most platforms currently have a critical gap.

A production-grade migration gate includes:

- A staging environment replay that runs the tenant's full agent workflow suite against the new model version using synthetic or anonymized production data

- A human-in-the-loop review step for enterprise tenants in regulated industries, where a platform engineer or the tenant's AI operations team can inspect the behavioral diff and approve or reject the migration

- A canary rollout mechanism that routes a small percentage of live traffic to the new model version while keeping the majority on the pinned version, monitoring divergence in real time

- A rollback contract: a documented, tested procedure for reverting a tenant to their last known-good model version and behavioral baseline within a defined SLA window
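The canary and rollback items above could be sketched with a small routing record per tenant. TenantRouting and its methods are hypothetical names, and hashing the request ID into 100 buckets is one common way to get a stable traffic split, not the only one.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TenantRouting:
    pinned_model_id: str
    candidate_model_id: Optional[str] = None
    canary_percent: int = 0                           # 0..100 share sent to the candidate
    history: List[str] = field(default_factory=list)  # earlier pins, oldest first

    def route(self, request_id: str) -> str:
        """Pick a model for this request; the same request ID always gets the same answer."""
        if self.candidate_model_id is None or self.canary_percent <= 0:
            return self.pinned_model_id
        digest = hashlib.sha256(request_id.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:4], "big") % 100
        if bucket < self.canary_percent:
            return self.candidate_model_id
        return self.pinned_model_id

    def promote(self) -> None:
        """Make the candidate the pinned version, remembering the old pin."""
        if self.candidate_model_id is None:
            raise RuntimeError("no candidate to promote")
        self.history.append(self.pinned_model_id)
        self.pinned_model_id = self.candidate_model_id
        self.candidate_model_id, self.canary_percent = None, 0

    def rollback(self) -> None:
        """Revert to the most recent known-good version and stop any canary."""
        if not self.history:
            raise RuntimeError("no known-good version to roll back to")
        self.pinned_model_id = self.history.pop()
        self.candidate_model_id, self.canary_percent = None, 0
```

Deterministic bucketing matters for agentic workloads: if a multi-step agent run shares one request ID, every step in that run lands on the same model rather than mixing versions mid-run.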

This last point deserves emphasis. You cannot offer enterprise customers a meaningful AI reliability SLA if you have no rollback primitive. In 2026, enterprise procurement teams are increasingly asking for exactly this kind of contractual guarantee before signing platform agreements.

The Multi-Tenant Complexity Layer

Everything described above becomes significantly more complex in a multi-tenant SaaS context, which is the dominant deployment model for agentic platforms. A few specific challenges deserve attention.

Model Version Sprawl

If every tenant can be on a different pinned model version, and your platform supports multiple model providers, you will quickly accumulate a matrix of active model versions that your infrastructure must support simultaneously. This requires a model version registry that tracks which versions are currently in active use across your tenant base, which versions are eligible for deprecation, and what the migration path looks like for tenants on older versions.

Deprecation of old model versions is itself a delicate operation. Providers eventually sunset older API endpoints, which means you may be forced to migrate tenants off a pinned version even if they have not opted in. Your architecture must account for this with advance notice pipelines and automated migration readiness assessments.
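One possible shape for such a registry, kept in memory for brevity. The class and method names are hypothetical, the model identifiers in any usage are made up, and this sketch assumes one pin per tenant rather than one per agent.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, List, Set


class ModelVersionRegistry:
    """Tracks which tenants are pinned to which model versions."""

    def __init__(self) -> None:
        self._tenants_by_version: Dict[str, Set[str]] = defaultdict(set)
        self._sunset_dates: Dict[str, date] = {}

    def pin(self, tenant_id: str, model_id: str) -> None:
        # A tenant holds one pin in this sketch, so drop any previous one first.
        for tenants in self._tenants_by_version.values():
            tenants.discard(tenant_id)
        self._tenants_by_version[model_id].add(tenant_id)

    def set_sunset(self, model_id: str, sunset: date) -> None:
        """Record the date on which the provider retires this version."""
        self._sunset_dates[model_id] = sunset

    def active_versions(self) -> Set[str]:
        return {m for m, t in self._tenants_by_version.items() if t}

    def retirable_versions(self) -> Set[str]:
        """Versions the platform has served that no tenant is pinned to any more."""
        return {m for m, t in self._tenants_by_version.items() if not t}

    def tenants_to_notify(self, today: date, notice_days: int) -> Dict[str, List[str]]:
        """Tenants pinned to a version whose sunset falls inside the notice window."""
        result = {}
        for model_id, sunset in self._sunset_dates.items():
            tenants = self._tenants_by_version.get(model_id, set())
            if tenants and (sunset - today).days <= notice_days:
                result[model_id] = sorted(tenants)
        return result
```

A scheduled job calling tenants_to_notify is one way to drive the advance notice pipeline: it surfaces exactly the tenants who will be forced off a version they did not choose to leave.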

Differential Drift Across Tenants

A model update that has negligible impact on one tenant's workload may be catastrophic for another's. A tenant running a legal contract analysis agent on highly structured prompts may be far more sensitive to changes in instruction-following fidelity than a tenant running a customer FAQ agent with loose, conversational prompts. Your drift scoring must be workload-relative, not based on a universal threshold.
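One way to make scoring workload-relative, offered as a sketch rather than a recommendation, is to compare each new divergence score against that tenant's own history instead of a fixed cutoff. The function name relative_drift and the min_spread floor are assumptions for illustration.

```python
from statistics import mean, stdev
from typing import Sequence


def relative_drift(history: Sequence[float], current: float,
                   min_spread: float = 1e-6) -> float:
    """Standard deviations by which the current score exceeds the tenant's own history.

    history holds past divergence scores for this tenant's workload on the
    pinned model; min_spread avoids dividing by zero for a perfectly stable history.
    """
    if len(history) < 2:
        raise ValueError("need at least two historical scores")
    spread = max(stdev(history), min_spread)
    return (current - mean(history)) / spread
```

Under this scheme a tightly constrained legal workload with historically tiny run-to-run variation flags a modest absolute change, while a conversational FAQ workload with naturally noisy outputs tolerates the same absolute change without alerting.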

Audit and Compliance Requirements

Enterprise customers in financial services, healthcare, and legal domains are now subject to AI governance regulations that require them to demonstrate that the AI systems operating on their data behave consistently and predictably over time. Your version pinning and drift detection infrastructure is not just an engineering reliability tool. It is a compliance artifact. Audit logs of model version history, drift events, migration approvals, and rollback operations need to be retained and exportable in formats that satisfy regulatory review.
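As an illustration only, the audit trail could be an append-only list of typed events with a per-tenant export. The event type names, the AuditEvent fields, and the choice of JSON Lines as the export format are assumptions; the format a given regulator or customer accepts has to be confirmed separately.

```python
import json
from dataclasses import asdict, dataclass
from typing import List

# Hypothetical event vocabulary covering the four record types named above.
EVENT_TYPES = {"version_pinned", "drift_detected", "migration_approved", "rollback"}


@dataclass(frozen=True)
class AuditEvent:
    timestamp: str   # ISO 8601 string, UTC
    tenant_id: str
    event_type: str
    model_id: str
    detail: str = ""


class AuditLog:
    """Append-only in-memory log; events are never edited or removed."""

    def __init__(self) -> None:
        self._events: List[AuditEvent] = []

    def record(self, event: AuditEvent) -> None:
        if event.event_type not in EVENT_TYPES:
            raise ValueError(f"unknown event type: {event.event_type}")
        self._events.append(event)

    def export_jsonl(self, tenant_id: str) -> str:
        """Return one tenant's events as JSON Lines, in recorded order."""
        return "\n".join(
            json.dumps(asdict(e), sort_keys=True)
            for e in self._events
            if e.tenant_id == tenant_id
        )
```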

What Happens to Platforms That Do Not Build This

The trajectory for platforms that ignore this problem is already visible in early 2026. Enterprise churn is increasingly driven not by feature gaps but by reliability failures that customers cannot explain or reproduce. When a tenant's AI-powered workflow starts producing wrong outputs and neither the tenant nor the platform can identify why or when it changed, the trust relationship breaks down in a way that is very difficult to recover from.

More acutely, as agentic systems take on higher-stakes decisions, the legal exposure from undocumented behavioral changes is growing. A platform that cannot demonstrate that its AI system behaved consistently and within contracted parameters will face increasingly difficult conversations with enterprise legal and procurement teams. In some regulated verticals, this is already a disqualifying gap.

The platforms that are winning enterprise AI contracts in 2026 are the ones that can answer a very simple question with documented evidence: "Can you prove that the AI system operating on our data today is behaviorally equivalent to the one we validated six months ago?" Most platforms currently cannot. That is the gap this architecture closes.

A Practical Roadmap for Backend Engineering Teams

If you are starting from zero on this problem, here is a pragmatic sequencing of the work:

- Week 1 to 2: Audit your current model invocation layer. Identify every place in your codebase where a model is called via an alias rather than an explicit versioned identifier. This is your immediate blast radius.

- Week 3 to 4: Implement a model version registry and begin recording the active model version for every agent execution in your observability logs. You cannot detect drift you do not log.

- Month 2: Build the per-tenant version manifest data model and the baseline snapshotting pipeline. Start with your top 10 enterprise accounts.

- Month 3: Implement semantic divergence scoring and wire it into your monitoring stack. Set conservative alert thresholds initially and tune based on signal quality.

- Month 4 to 5: Build the migration gate workflow, including staging replay, canary routing, and rollback procedures. Document the rollback SLA and surface it to your enterprise customer success team.

- Month 6 and beyond: Extend to all tenants, integrate with your compliance audit log pipeline, and begin offering model version stability as a documented, contractual feature of your enterprise tier.
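The Week 1 to 2 audit in the roadmap above could start with a crude scan for model identifiers that lack a date suffix. This sketch assumes that versioned identifiers end in a date such as -2024-08-06 or -20241022, and that model names begin with one of a few known prefixes; both assumptions need adjusting to the providers and naming schemes a given codebase actually uses.

```python
import re
from typing import List, Tuple

# Quoted string literals that look like model names (assumed prefixes).
MODEL_LITERAL = re.compile(r"""["']((?:gpt|claude|llama)[\w.\-]*)["']""")
# Assumed convention: a pinned identifier ends in -YYYY-MM-DD or -YYYYMMDD.
DATED_SUFFIX = re.compile(r"-(?:\d{4}-\d{2}-\d{2}|\d{8})$")


def find_alias_calls(source: str) -> List[Tuple[int, str]]:
    """Return (line number, model id) for each model literal without a date suffix."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for match in MODEL_LITERAL.finditer(line):
            model_id = match.group(1)
            if not DATED_SUFFIX.search(model_id):
                hits.append((lineno, model_id))
    return hits
```

A scan like this will miss identifiers built at runtime or read from configuration, so it is a starting inventory rather than proof that no aliases remain.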

Conclusion: Version Pinning Is the New Uptime SLA

For the previous generation of software infrastructure, the foundational reliability promise was uptime. Enterprise customers needed to know your service would be available when they needed it. That problem is largely solved. The infrastructure tooling, the redundancy patterns, and the SLA frameworks are mature and well understood.

For the current generation of agentic AI infrastructure, the foundational reliability promise is behavioral consistency. Enterprise customers need to know that the AI system they validated, audited, and deployed is the same AI system running on their production data next month. That problem is not solved. The tooling is nascent, the patterns are emerging, and most platforms have not yet recognized it as a first-class infrastructure concern.

The backend engineers who build per-tenant LLM version pinning and drift detection pipelines in 2026 are not just solving an operational headache. They are building the infrastructure primitive that makes enterprise agentic AI trustworthy at scale. That is foundational work, and it is overdue. The reckoning is here. The question is whether your platform will be ahead of it or scrambling to catch up when the first major silent update breaks something your customers cannot ignore.