Synchronous vs. Asynchronous Agentic Workflow Execution: Which Model Holds Up When Per-Tenant Task Queues Spike Beyond Foundation Model Throughput Limits

Here is a scenario that every platform engineering team running multi-tenant AI infrastructure has either already lived through or is about to: it's 9:07 AM on a Tuesday, three of your largest enterprise tenants simultaneously trigger high-volume agentic pipelines, and within 90 seconds your foundation model provider is returning 429 Too Many Requests errors faster than your retry logic can absorb them. Your synchronous agents are blocking. Your SLAs are on fire. And your on-call engineer is staring at a dashboard that looks like a seismograph during an earthquake.

This is not a theoretical edge case anymore. As of 2026, agentic AI workflows have moved from experimental to production-critical across industries ranging from legal tech to financial services to DevOps automation. The architectural decisions you made when these systems were low-traffic prototypes are now being stress-tested at scale. And the single most consequential decision, one that most teams made almost accidentally, is whether their agent execution model is synchronous or asynchronous.

This article goes deep on that choice, specifically in the context of per-tenant task queue spikes that exceed foundation model throughput limits. We will look at how each model behaves under pressure, where each one breaks, and which one actually holds up when the traffic hits.

Setting the Stage: What "Per-Tenant Task Queue Spikes" Actually Means

Before comparing execution models, it is worth being precise about the failure scenario we are designing around. In a multi-tenant agentic platform, each tenant (an enterprise customer, an internal business unit, or an API subscriber) generates its own stream of agent tasks. These tasks might be document analysis jobs, code review pipelines, research synthesis workflows, or tool-calling chains that span multiple LLM calls per task.

A "spike" occurs when a tenant's task submission rate temporarily exceeds the system's ability to dispatch those tasks to the underlying foundation model. The causes are varied:

- Batch imports: A tenant uploads 10,000 contracts for simultaneous analysis.

- Triggered automation: A CI/CD event fans out 500 parallel code review agents.

- Cascading sub-tasks: A single orchestrator agent spawns dozens of specialist sub-agents, each of which spawns more.

- Cross-tenant coincidence: Multiple large tenants spike at the same time, exhausting shared rate-limit budgets.

The throughput ceiling is imposed at multiple layers: the foundation model provider's tokens-per-minute (TPM) and requests-per-minute (RPM) limits, your own infrastructure's concurrency caps, and the latency characteristics of the model itself. In 2026, even with the improved throughput of frontier models like GPT-5-class and Gemini Ultra 2-class systems, these ceilings are real, especially when you are serving dozens of tenants simultaneously from a shared model endpoint.

Synchronous Agentic Execution: The Mental Model and Its Strengths

In a synchronous execution model, each agent task occupies a thread (or coroutine) from invocation to completion. The calling process waits. The agent runs its reasoning loop, makes tool calls, receives responses, and eventually returns a result. The entire chain is a single, unbroken unit of execution from the perspective of the orchestration layer.

Where Synchronous Execution Genuinely Shines

Synchronous execution is not inherently bad. In the right context, it is actually the simpler, more debuggable, and more predictable choice. Its strengths include:

- Simplicity of control flow: The execution graph is a call stack. Errors propagate naturally. Timeouts are straightforward to reason about. Developers familiar with standard request/response patterns can onboard quickly.

- Low-latency single-task scenarios: When a single user is waiting for a single agent to complete a bounded task, synchronous execution minimizes overhead. There is no queue serialization, no broker round-trip, no polling delay.

- Strong consistency guarantees: Because each task holds its execution context continuously, state management is trivial. You do not need to serialize and deserialize agent state between steps.

- Easier tracing and observability: A single trace ID covers the entire agent lifecycle. Tools like OpenTelemetry integrate cleanly because there is no distributed handoff to stitch together.

How Synchronous Execution Fails Under Throughput Pressure

The failure modes of synchronous execution under spike conditions are both predictable and brutal. When your foundation model provider starts throttling, every blocked synchronous agent holds a live thread or process resource. You get a cascading resource exhaustion pattern that looks like this:

- Model provider returns 429 or increases latency significantly.

- Synchronous agents begin blocking on retries, holding threads open.

- Thread pool exhaustion occurs; new task submissions start queuing at the infrastructure layer.

- The queue grows faster than it drains, because the drain rate is capped by the model's throttled throughput.

- Memory pressure increases as each blocked thread holds its full execution context.

- Eventually, the system either crashes, drops tasks, or begins returning timeouts to tenants.

The critical insight here is that synchronous execution conflates task acceptance with task execution capacity. The moment you accept a task, you are committing a resource to it. When execution capacity is constrained by an external throttle (the foundation model), that resource stays committed and idle, burning your infrastructure budget while delivering zero throughput.
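To make the cascade concrete, here is a minimal discrete-time sketch of a bounded thread pool sitting in front of a throttled model. It is an illustration, not a benchmark: `simulate_sync_pool`, its parameters, and the tick-based model are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Tick:
    busy_threads: int  # threads held at the peak of this tick, progressing or not
    waiting: int       # tasks accepted but still without a thread
    completed: int     # cumulative completed tasks


def simulate_sync_pool(pool_size: int, arrivals_per_tick: int,
                       model_rate_per_tick: int, ticks: int) -> list[Tick]:
    """Toy model: every started task holds a thread until the model answers."""
    busy = waiting = completed = 0
    history = []
    for _ in range(ticks):
        waiting += arrivals_per_tick
        # Free threads pick up waiting tasks, then block on the model call.
        started = min(pool_size - busy, waiting)
        busy += started
        waiting -= started
        peak_busy = busy
        # Only as many tasks finish as the throttled model allows.
        finished = min(busy, model_rate_per_tick)
        busy -= finished
        completed += finished
        history.append(Tick(peak_busy, waiting, completed))
    return history


if __name__ == "__main__":
    for i, tick in enumerate(simulate_sync_pool(8, 5, 2, 4), start=1):
        print(i, tick)
```

With 8 threads, 5 arrivals per tick, and a model that completes only 2 tasks per tick, the pool is saturated by the second tick and the backlog then grows by 3 tasks every tick. Adding threads only delays saturation; it does not change the drain rate.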

For per-tenant fairness, the situation is even worse. A single large tenant's spike can monopolize the thread pool, starving other tenants entirely. You can implement per-tenant thread limits, but this adds significant complexity and still does not solve the fundamental problem: threads are being held by tasks that cannot make progress.

Asynchronous Agentic Execution: The Mental Model and Its Strengths

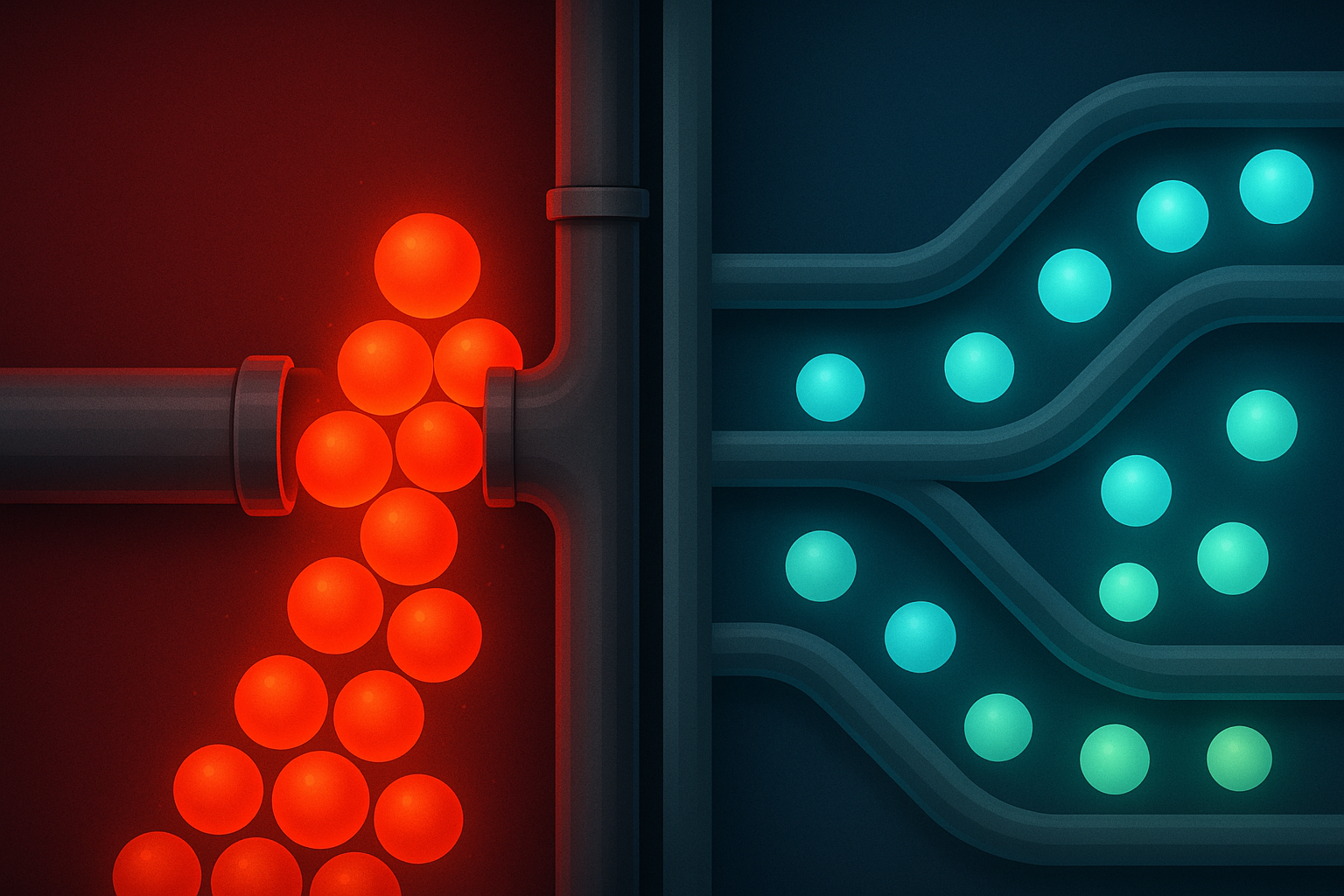

In an asynchronous execution model, task submission and task execution are decoupled. A tenant submits a task to a durable queue. A worker pool pulls tasks from that queue and dispatches them to the foundation model at a rate governed by available throughput. When throughput is constrained, tasks wait in the queue rather than holding live execution resources. Workers are only occupied when they can actually make progress.

The Core Advantage: Decoupling Acceptance from Execution

This decoupling is the architectural superpower of async execution under spike conditions. Consider what happens when the same 429-storm hits an async system:

- Model provider throttles; workers receive rate limit signals.

- Workers apply backoff and reduce dispatch rate. They release themselves back to the pool rather than blocking.

- Tasks accumulate in the durable queue, but the queue is cheap storage, not expensive compute.

- Worker pool remains responsive. New high-priority tasks can still be dispatched if capacity is available.

- As the model provider recovers throughput, workers drain the queue at the maximum sustainable rate.

- No tasks are dropped. No threads are exhausted. Tenants receive their results with increased latency but without errors.

This is a fundamentally more resilient failure mode. You trade latency for reliability, and in most enterprise agentic use cases, that is exactly the right trade.
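The sequence above can be sketched with `asyncio`. This is a minimal in-process illustration, not a production worker: `FakeModel` and `Throttled` are hypothetical stand-ins for a real provider client and its 429 response, and an `asyncio.Queue` stands in for a durable broker.

```python
import asyncio


class Throttled(Exception):
    """Stands in for an HTTP 429 from the model provider (hypothetical)."""


class FakeModel:
    """Hypothetical provider client that rejects its first N calls."""

    def __init__(self, reject_first: int):
        self.reject_remaining = reject_first

    async def complete(self, prompt: str) -> str:
        if self.reject_remaining > 0:
            self.reject_remaining -= 1
            raise Throttled()
        return f"done:{prompt}"


async def worker(queue, model, results, base_delay=0.001, max_delay=0.05):
    delay = base_delay
    while True:
        task = await queue.get()
        try:
            results[task] = await model.complete(task)
            delay = base_delay  # success: reset the backoff
        except Throttled:
            # Return the task to the queue instead of holding it while
            # blocked, then slow this worker down with exponential backoff.
            queue.put_nowait(task)
            await asyncio.sleep(delay)
            delay = min(delay * 2, max_delay)
        finally:
            queue.task_done()


async def run_demo(tasks, reject_first, workers=2):
    queue = asyncio.Queue()
    for task in tasks:
        queue.put_nowait(task)
    model = FakeModel(reject_first)
    results = {}
    pool = [asyncio.create_task(worker(queue, model, results))
            for _ in range(workers)]
    await queue.join()  # returns once every task has been completed
    for w in pool:
        w.cancel()
    await asyncio.gather(*pool, return_exceptions=True)
    return results


if __name__ == "__main__":
    print(asyncio.run(run_demo(["a", "b", "c"], reject_first=4)))
```

Throttled tasks go back on the queue and the worker waits without blocking the event loop, so every task eventually completes, only later.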

Per-Tenant Fairness Becomes Tractable

Async queue architectures also make per-tenant fairness policies dramatically easier to implement. With a queue per tenant (or a weighted fair-queuing scheme across a shared queue), you can enforce policies like:

- Rate-limit budgets: Each tenant's queue is drained at a rate proportional to their subscription tier.

- Priority lanes: Urgent tasks from any tenant can be placed in a high-priority queue that preempts standard-tier work.

- Burst absorption: A tenant can submit a burst of tasks without impacting other tenants, because the burst is absorbed into their queue and drained at the permitted rate.

- Backpressure signaling: When a tenant's queue depth exceeds a threshold, the API layer can return a 202 Accepted with an estimated completion time rather than a blocking wait or an error.

This is the architecture that frameworks like Temporal, Apache Kafka-based orchestrators, and purpose-built agent platforms such as LangGraph Cloud and Prefect's agentic layer have converged on in 2026. The durable, per-tenant queue is the load-bearing structure of a scalable multi-tenant agent platform.
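As a rough illustration of the rate-proportional draining and burst absorption described above, here is a sketch of weighted round-robin across per-tenant queues. Weighted round-robin is a simpler relative of weighted fair queuing, chosen here for brevity. The class name, tenant names, and weights are assumptions for the example.

```python
from collections import deque


class WeightedRoundRobinScheduler:
    """One queue per tenant; each round drains up to `weight` tasks per tenant."""

    def __init__(self, weights: dict[str, int]):
        self.weights = dict(weights)
        self.queues = {tenant: deque() for tenant in weights}

    def submit(self, tenant: str, task: str) -> None:
        # A burst only lengthens the submitting tenant's own queue.
        self.queues[tenant].append(task)

    def next_batch(self) -> list[tuple[str, str]]:
        batch = []
        for tenant, weight in self.weights.items():
            queue = self.queues[tenant]
            for _ in range(min(weight, len(queue))):
                batch.append((tenant, queue.popleft()))
        return batch


if __name__ == "__main__":
    scheduler = WeightedRoundRobinScheduler({"premium": 2, "standard": 1})
    for i in range(5):
        scheduler.submit("premium", f"p{i}")  # burst from one tenant
    for i in range(2):
        scheduler.submit("standard", f"s{i}")
    while batch := scheduler.next_batch():
        print(batch)
```

Even though the premium tenant submits a burst of five tasks first, the standard tenant still gets one dispatch slot in every round until its queue is empty.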

The Nuanced Reality: Async Is Not a Free Lunch

It would be intellectually dishonest to declare async the universal winner without cataloging its real costs. Teams that have migrated from sync to async execution in production consistently report the following challenges:

State Serialization Complexity

Async agents must be able to suspend and resume. This means every piece of agent state, including the conversation history, tool call results, intermediate reasoning steps, and execution metadata, must be serializable to durable storage between steps. For simple agents, this is manageable. For complex multi-step agents with rich in-memory state, designing a clean serialization boundary is genuinely hard work. You will encounter edge cases with non-serializable objects, circular references, and versioning mismatches when you deploy updated agent logic against in-flight tasks.
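A minimal sketch of a versioned checkpoint shows what a serialization boundary can look like. The field names, the version numbers, and the v1-to-v2 migration are all hypothetical; the point is only that in-flight tasks written by older agent logic need an explicit upgrade path.

```python
import json
from dataclasses import asdict, dataclass, field

SCHEMA_VERSION = 2  # hypothetical current version of the checkpoint layout


@dataclass
class AgentCheckpoint:
    task_id: str
    step: int
    messages: list = field(default_factory=list)       # conversation history
    tool_results: dict = field(default_factory=dict)   # tool name -> result
    schema_version: int = SCHEMA_VERSION


def dump_checkpoint(checkpoint: AgentCheckpoint) -> str:
    # Only JSON-friendly types cross the boundary; live handles do not.
    return json.dumps(asdict(checkpoint), sort_keys=True)


def load_checkpoint(raw: str) -> AgentCheckpoint:
    data = json.loads(raw)
    version = data.get("schema_version", 1)
    if version == 1:
        # Hypothetical migration: v1 stored tool results as [name, result] pairs.
        data["tool_results"] = dict(data.get("tool_results", []))
        data["schema_version"] = SCHEMA_VERSION
    elif version != SCHEMA_VERSION:
        raise ValueError(f"unsupported checkpoint version: {version}")
    return AgentCheckpoint(**data)


if __name__ == "__main__":
    cp = AgentCheckpoint("task-1", 3, [{"role": "user", "content": "hi"}],
                         {"search": "ok"})
    print(load_checkpoint(dump_checkpoint(cp)) == cp)
```

Rejecting unknown versions outright, rather than guessing, keeps a newer checkpoint from being silently misread by an older worker during a rolling deploy.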

Observability Becomes Distributed

A single agent execution that spans multiple queue hops, multiple worker invocations, and multiple model calls generates a distributed trace that must be stitched together by correlation ID. Without disciplined instrumentation from day one, debugging a misbehaving async agent in production feels like forensic archaeology. Tools like Langfuse, Arize Phoenix, and Honeycomb have improved significantly in 2026 for async agent tracing, but they require deliberate integration effort.

Latency Floor Is Higher

Every queue hop adds latency. For interactive use cases where a human is waiting for an agent response, the additional serialization, queuing, and polling overhead of an async model can add hundreds of milliseconds to seconds of latency compared to a direct synchronous call. For real-time conversational agents, this is often unacceptable.

Operational Complexity

Running a production async agent platform means operating a message broker (Kafka, RabbitMQ, SQS, or a purpose-built workflow engine), managing worker pool scaling, handling dead-letter queues for failed tasks, implementing idempotency to handle worker retries safely, and building result delivery mechanisms (webhooks, polling endpoints, or push notifications). This is a meaningful increase in operational surface area compared to a synchronous HTTP service.
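Two of those responsibilities, idempotent handling of redeliveries and dead-lettering after repeated failures, fit in a short sketch. The message shape (`idempotency_key`, `payload`, `attempts`) and the in-memory `processed` and `dead_letters` stores are assumptions; a real platform would back them with the broker and a durable store.

```python
def handle(message: dict, processed: dict, dead_letters: list,
           handler, max_attempts: int = 3):
    """Process one delivery at most once per idempotency key."""
    key = message["idempotency_key"]
    if key in processed:
        # Duplicate delivery after a worker retry: reuse the earlier result.
        return processed[key]
    message["attempts"] = message.get("attempts", 0) + 1
    try:
        result = handler(message["payload"])
    except Exception as exc:
        if message["attempts"] >= max_attempts:
            # Park the poison message instead of retrying forever.
            dead_letters.append({**message, "error": repr(exc)})
            return None
        raise  # let the broker redeliver
    processed[key] = result
    return result


if __name__ == "__main__":
    processed, dead_letters = {}, []
    msg = {"idempotency_key": "k1", "payload": "summarize"}
    print(handle(dict(msg), processed, dead_letters, str.upper))
    print(handle(dict(msg), processed, dead_letters, str.upper))  # served from cache
```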

Head-to-Head: When the Queue Spikes, Who Wins?

Let's put both models directly side by side across the dimensions that matter most when per-tenant queues spike beyond foundation model throughput limits:

| Dimension | Synchronous | Asynchronous |
|---|---|---|
| Behavior under 429 throttling | Thread exhaustion, cascading failures | Queue depth increases; workers back off gracefully |
| Per-tenant fairness | Difficult; requires complex thread-pool partitioning | Natural; queue-per-tenant or weighted fair queuing |
| Resource efficiency under load | Poor; idle threads hold memory and handles | Excellent; workers only active when making progress |
| Task drop risk under spike | High without explicit overflow handling | Low; durable queue absorbs bursts |
| Latency for single tasks | Lower (no queue overhead) | Higher (queue serialization adds latency floor) |
| Implementation simplicity | High; standard request/response patterns | Lower; requires broker, workers, state serialization |
| Observability complexity | Low; single trace per task | Higher; distributed traces require correlation |
| Horizontal scalability | Limited by thread/process ceiling | Near-linear; add workers to increase throughput |
| Retry and idempotency handling | Caller-managed, often inconsistent | Platform-managed, consistent across all tasks |

The verdict is clear in the spike scenario: asynchronous execution wins decisively on every resilience dimension. The only meaningful advantages synchronous execution retains are lower latency for single tasks and lower implementation complexity, neither of which is relevant when you are in the middle of a multi-tenant throughput crisis.

The Hybrid Architecture: What Production Systems Actually Look Like in 2026

The most sophisticated agentic platforms in production today do not choose one model exclusively. They implement a hybrid execution strategy that routes tasks to the appropriate model based on task characteristics:

The Routing Layer

A routing layer at task submission time classifies each incoming task and directs it to either a synchronous fast path or an asynchronous durable path based on criteria like:

- Expected duration: Tasks estimated to complete in under 5 seconds go synchronous. Tasks expected to take longer go async.

- Interactivity requirement: Tasks with a human waiting for a real-time response go synchronous. Background, batch, or triggered tasks go async.

- Current system load: Under normal conditions, borderline tasks go synchronous. When the system detects elevated queue depth or model throttling, the threshold shifts and more tasks are routed async.

- Tenant tier: Premium tenants with strict latency SLAs may have dedicated synchronous capacity with reserved model quota. Standard tenants route through the async path.
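One way to combine these four criteria is sketched below. How the criteria interact is a design decision the list leaves open, so the precedence used here (premium interactive traffic first, then interactivity, then a load-adjusted duration cutoff) is an assumption, as are the `Task` fields and every threshold other than the 5-second cutoff.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    SYNC = "sync"
    ASYNC = "async"


@dataclass
class Task:
    expected_seconds: float
    interactive: bool
    tenant_tier: str  # "premium" or "standard" (hypothetical tiers)


def route(task: Task, queue_depth: int, throttled: bool,
          sync_cutoff_s: float = 5.0, loaded_cutoff_s: float = 1.0,
          depth_threshold: int = 100) -> Path:
    # Under load, shrink the duration cutoff so more work goes async.
    under_load = throttled or queue_depth > depth_threshold
    cutoff = loaded_cutoff_s if under_load else sync_cutoff_s
    if task.tenant_tier == "premium" and task.interactive:
        return Path.SYNC  # assumes reserved quota backs this path
    if not task.interactive:
        return Path.ASYNC  # background, batch, or triggered work
    return Path.SYNC if task.expected_seconds < cutoff else Path.ASYNC


if __name__ == "__main__":
    chat = Task(expected_seconds=2.0, interactive=True, tenant_tier="standard")
    print(route(chat, queue_depth=0, throttled=False))    # Path.SYNC
    print(route(chat, queue_depth=500, throttled=False))  # Path.ASYNC
```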

Adaptive Backpressure

The most resilient systems implement adaptive backpressure that dynamically shifts traffic between paths. When the synchronous path detects rising latency (a leading indicator of impending throttling), it begins shedding tasks to the async path before the 429 storm hits. This proactive shedding prevents the thread exhaustion cascade that kills pure synchronous systems.
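A simple way to detect "rising latency" is an exponentially weighted moving average compared against a baseline. The sketch below assumes that approach; the class name, the smoothing factor, and the 2x shed ratio are illustrative choices rather than recommended values.

```python
class LatencyShedder:
    """Sheds sync traffic to the async path when smoothed latency climbs."""

    def __init__(self, baseline_s: float, shed_ratio: float = 2.0,
                 alpha: float = 0.3):
        self.baseline_s = baseline_s
        self.shed_ratio = shed_ratio
        self.alpha = alpha          # weight given to the newest sample
        self.ewma_s = baseline_s

    def observe(self, latency_s: float) -> None:
        # Exponentially weighted moving average of model-call latency.
        self.ewma_s = self.alpha * latency_s + (1 - self.alpha) * self.ewma_s

    def should_shed(self) -> bool:
        return self.ewma_s > self.shed_ratio * self.baseline_s


if __name__ == "__main__":
    shedder = LatencyShedder(baseline_s=1.0, alpha=0.5)
    for sample in (1.0, 5.0, 1.0):
        shedder.observe(sample)
        print(sample, shedder.ewma_s, shedder.should_shed())
```

A lower `alpha` reacts more slowly but avoids flapping between paths on a single slow call.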

Async-First Agent Design with Sync Facades

Many teams have adopted an async-first internal architecture with synchronous facades for clients that need request/response semantics. The client submits a task and receives a task ID. If the task completes within a short polling window (say, 10 seconds), the facade returns the result synchronously. If the task is still running, the facade returns a 202 with a polling URL. This pattern, sometimes called "async with sync facade" or "long polling with fallback," gives clients simple semantics while the backend operates on durable async rails.
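The facade logic can be sketched with `asyncio.wait_for` and `asyncio.shield`. In this illustration an in-process task stands in for the durable async backend, and `submit_with_facade`, the `registry` dict, and the `/tasks/{id}` polling URL are hypothetical names.

```python
import asyncio


async def submit_with_facade(coro, task_id: str, registry: dict,
                             window_s: float = 10.0):
    """Return (200, result) if the task finishes inside the window, else (202, poll info)."""
    task = asyncio.ensure_future(coro)
    registry[task_id] = task  # lets a polling endpoint find the task later
    try:
        # shield() keeps the underlying task running if the wait times out.
        result = await asyncio.wait_for(asyncio.shield(task), timeout=window_s)
        return 200, {"task_id": task_id, "result": result}
    except asyncio.TimeoutError:
        return 202, {"task_id": task_id, "poll_url": f"/tasks/{task_id}"}


async def _demo():
    async def slow_agent():
        await asyncio.sleep(0.2)
        return "late result"

    registry = {}
    status, body = await submit_with_facade(slow_agent(), "t1", registry,
                                            window_s=0.01)
    print(status, body)
    print(await registry["t1"])  # the task kept running after the 202


if __name__ == "__main__":
    asyncio.run(_demo())
```

Without the shield, the timeout would cancel the agent run itself, which is the opposite of what a fallback to polling needs.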

Practical Recommendations for Platform Engineers

If you are building or operating a multi-tenant agentic platform in 2026 and you are concerned about throughput spike resilience, here is a prioritized action list:

1. Audit Your Current Architecture Honestly

Map every agent execution path and classify it as synchronous or asynchronous. Identify which paths hold live resources during model calls. These are your blast radius in a throttling event.

2. Implement Per-Tenant Queue Depth Monitoring

You cannot manage what you cannot see. Instrument your queues with per-tenant depth, age-of-oldest-task, and drain rate metrics. Set alerts at queue depth thresholds that give you time to react before SLAs are breached.
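The three metrics named above can be derived from enqueue and dequeue timestamps alone. This sketch assumes a FIFO queue per tenant and takes the clock as an argument so it stays deterministic; `TenantQueueStats` and the 60-second window are illustrative choices.

```python
from collections import deque


class TenantQueueStats:
    """Tracks depth, age of oldest task, and drain rate for one tenant's FIFO queue."""

    def __init__(self):
        self.enqueued_at = deque()  # enqueue times of tasks still waiting
        self.completions = deque()  # dequeue times inside the rate window

    def on_enqueue(self, now: float) -> None:
        self.enqueued_at.append(now)

    def on_dequeue(self, now: float) -> None:
        self.enqueued_at.popleft()  # FIFO: the oldest task leaves first
        self.completions.append(now)

    def snapshot(self, now: float, window_s: float = 60.0) -> dict:
        # Forget dequeues that have fallen out of the rate window.
        while self.completions and self.completions[0] <= now - window_s:
            self.completions.popleft()
        oldest = now - self.enqueued_at[0] if self.enqueued_at else 0.0
        return {
            "depth": len(self.enqueued_at),
            "oldest_age_s": oldest,
            "drain_per_s": len(self.completions) / window_s,
        }


if __name__ == "__main__":
    stats = TenantQueueStats()
    for t in (0.0, 1.0, 2.0):
        stats.on_enqueue(t)
    stats.on_dequeue(30.0)
    print(stats.snapshot(now=60.0))
```

Age of the oldest task is often the more useful alerting signal: a deep queue that drains quickly is healthy, while a shallow queue whose head is minutes old is not.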

3. Externalize Your Rate Limit Budget

Use a centralized token bucket or leaky bucket rate limiter that tracks your foundation model quota consumption in real time. All workers should check this limiter before dispatching, not after receiving a 429. Reactive rate limiting (retry on 429) is far less efficient than proactive rate limiting (check before dispatch), because every rejected request still costs a network round trip and a backoff delay before the retry.
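For reference, the core of a token bucket is only a few lines. The sketch below is in-process and takes the clock as an argument; a centralized version would keep the same state in a shared store so that all workers draw from one budget. The class name and the numbers in the usage example are assumptions.

```python
class TokenBucket:
    """Refills at `rate_per_s` up to `capacity`; each dispatch spends `cost` tokens."""

    def __init__(self, rate_per_s: float, capacity: float, now: float = 0.0):
        self.rate_per_s = rate_per_s
        self.capacity = capacity
        self.tokens = capacity
        self.updated = now

    def try_acquire(self, cost: float, now: float) -> bool:
        # Refill for the time elapsed since the last check, capped at capacity.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_s)
        self.updated = now
        if cost <= self.tokens:
            self.tokens -= cost
            return True
        return False  # caller should leave the task queued and try later


if __name__ == "__main__":
    bucket = TokenBucket(rate_per_s=10, capacity=20)
    print(bucket.try_acquire(15, now=0.0))  # True: burst within capacity
    print(bucket.try_acquire(10, now=0.0))  # False: only 5 tokens left
    print(bucket.try_acquire(10, now=0.5))  # True: 5 tokens refilled
```

For a TPM budget, `cost` would be the estimated token count of the request; for an RPM budget it would simply be 1.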

4. Design Agent State for Serializability from Day One

If you are still in the design phase, treat serializability as a first-class requirement for all agent state. Define clear checkpointing boundaries in your agent reasoning loops. This investment pays dividends not only for async execution but also for agent resumability, crash recovery, and long-running task support.

5. Invest in Distributed Trace Correlation

Instrument every queue publish and consume operation with trace context propagation. A task that spans three queue hops and six model calls should produce a single coherent trace in your observability platform. Without this, debugging production incidents in async systems is extremely painful.
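The mechanics of propagation are easy to see in a hand-rolled sketch that carries a W3C Trace Context style `traceparent` header on each message. In production you would normally let your tracing library inject and extract this header; the `publish` and `consume` helpers and the list-backed queue here are hypothetical.

```python
import secrets


def new_traceparent() -> str:
    # Layout: version - 16-byte trace id - 8-byte span id - flags (all hex).
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-01"


def child_traceparent(parent: str) -> str:
    # Keep the trace id, mint a new span id for this hop.
    version, trace_id, _parent_span, flags = parent.split("-")
    return f"{version}-{trace_id}-{secrets.token_hex(8)}-{flags}"


def publish(queue: list, payload: str, traceparent: str) -> None:
    # The trace context travels in message headers, not in the payload.
    queue.append({"payload": payload, "headers": {"traceparent": traceparent}})


def consume(queue: list) -> tuple[str, str]:
    message = queue.pop(0)
    context = child_traceparent(message["headers"]["traceparent"])
    return message["payload"], context


if __name__ == "__main__":
    queue = []
    root = new_traceparent()
    publish(queue, "step-1", root)
    payload, context = consume(queue)
    publish(queue, "step-2", context)  # the next hop stays in the same trace
    print(root)
    print(context)
```

Because every hop keeps the same trace id, the observability backend can stitch the queue hops and model calls of one task back into a single trace.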

Conclusion: The Architecture That Survives the Spike

The honest answer to the question posed in this article's title is this: asynchronous execution is the model that holds up when per-tenant task queues spike beyond foundation model throughput limits. It does so not because it is faster or simpler, but because it decouples resource commitment from execution capacity in a way that synchronous execution fundamentally cannot.

Synchronous execution remains valuable and appropriate for interactive, low-latency, single-task scenarios. But as the backbone of a multi-tenant agentic platform serving enterprise workloads, it carries structural vulnerabilities that become catastrophic exactly when you need reliability the most: during high-traffic spikes.

The practical path forward for most teams is a hybrid architecture with async-first internals, synchronous facades where client experience demands it, and a routing layer sophisticated enough to shift traffic dynamically as load conditions change. This is not a simple system to build. But it is the system that will still be standing when your three largest tenants all decide to run their quarterly batch pipelines on the same Tuesday morning.

The teams that are winning in agentic infrastructure in 2026 are the ones who stopped treating execution model as an implementation detail and started treating it as a core architectural decision. The spike will come. The question is whether your architecture is ready for it.