Redis vs. Purpose-Built Vector Memory Stores for Per-Tenant Agent State: Which Architecture Survives at Scale?

There is a quiet architectural crisis unfolding inside every serious multi-tenant LLM platform right now. As agentic AI systems move from single-session demos into persistent, cross-session workflows serving thousands of tenants simultaneously, the question of where and how you store per-tenant agent memory has shifted from an engineering footnote to a make-or-break infrastructure decision.

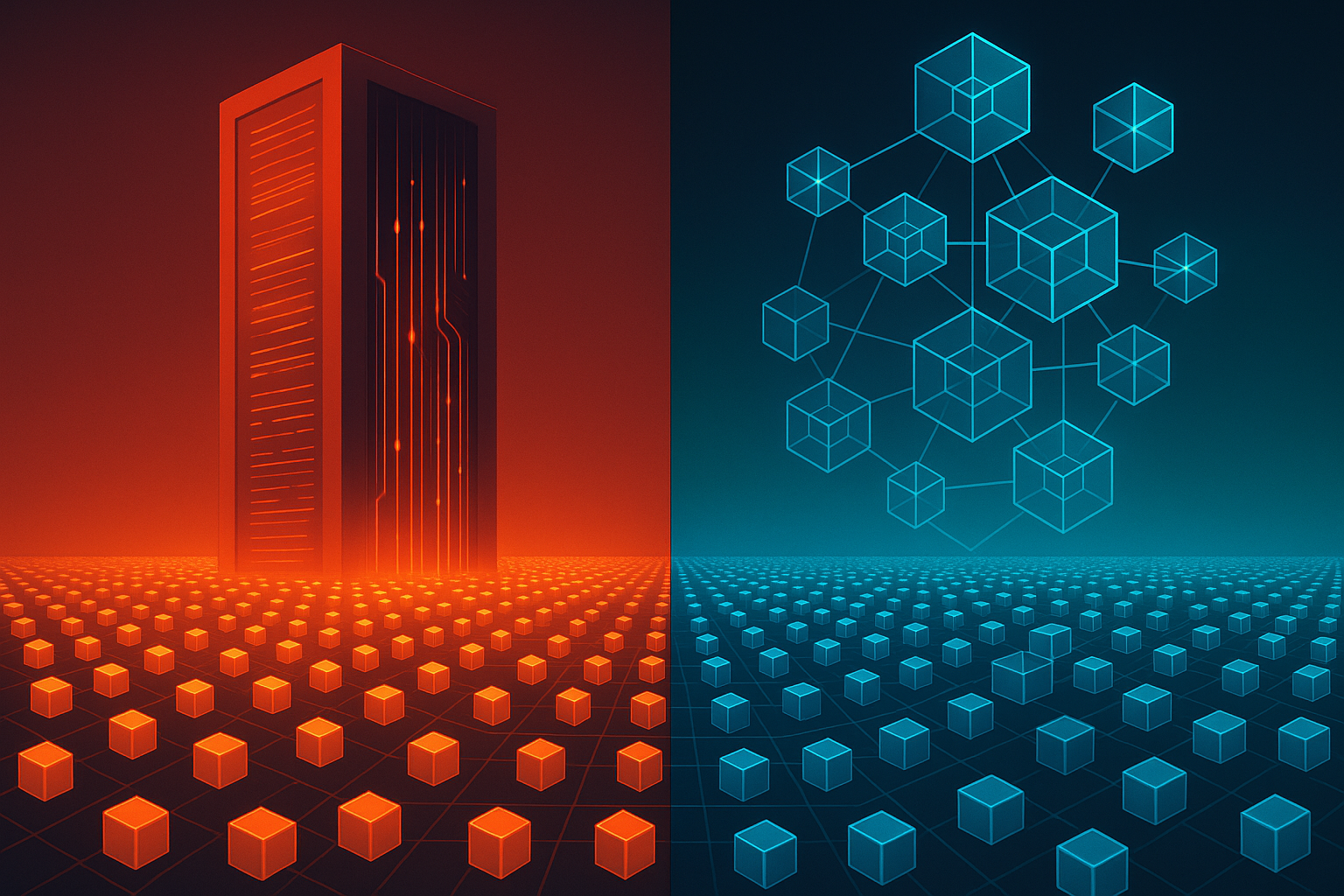

Two camps have emerged. The first reaches for Redis: fast, familiar, operationally proven, and already in the stack. The second argues that purpose-built vector memory stores like Pinecone, Weaviate, Qdrant, or Chroma are the only architectures that can handle semantic retrieval at scale without bleeding embeddings across tenant isolation boundaries. Both camps have compelling arguments. Both camps also have catastrophic failure modes that their advocates tend to underplay.

This article goes deep on both architectures, compares them across the dimensions that actually matter in production, and gives you a clear decision framework for your own platform. No hand-waving. No vendor cheerleading.

Why Per-Tenant Agent State Is a Different Problem Than Caching

Before diving into the comparison, it is worth being precise about what we mean by "per-tenant agent state." This is not session caching. This is not a simple key-value lookup for user preferences. Per-tenant agent state in a multi-tenant LLM platform encompasses:

- Episodic memory: What did this tenant's users do, say, or request across prior sessions?

- Semantic memory: What concepts, entities, and domain knowledge has the agent accumulated for this tenant's context?

- Working memory: What is the agent actively reasoning about in the current session window?

- Procedural memory: What workflows, tool-use patterns, and behavioral preferences has the agent learned for this tenant?

The cross-session retrieval requirement is what makes this genuinely hard. When a user returns after three weeks, the agent must reconstruct relevant context without a full conversation replay. That reconstruction is a semantic search problem, not a key lookup. And when you are running that problem for 10,000 tenants simultaneously, the isolation and performance constraints become severe.

Architecture One: Redis as the Per-Tenant Memory Layer

Redis has been the default "fast memory" layer for web applications for over a decade, and its appeal for agent state is obvious. Most teams already operate Redis clusters. Latency for simple key lookups is typically sub-millisecond. The operational playbook is well understood. And since Redis Stack introduced native vector similarity search, with HNSW and FLAT available as index types, the argument for using Redis as a unified working memory and retrieval layer has become genuinely attractive.

How the Redis Architecture Typically Looks

In a Redis-based per-tenant agent memory design, the common pattern involves namespacing all keys under a tenant identifier prefix (for example, tenant:{tenant_id}:memory:{memory_id}), storing embeddings as Redis vector fields, and running KNN queries scoped to a tenant's key namespace. Working memory lives in Redis hashes or JSON documents, while episodic memory is stored as vector-indexed entries that can be retrieved by semantic similarity at query time.
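As a rough illustration of that pattern, the sketch below builds the prefixed key, serializes an embedding, and composes a tenant-scoped KNN query string. It is a minimal sketch under stated assumptions: the field names `tenant_id` and `embedding`, the ID character set, and the helper names are all hypothetical, and the query string assumes a schema with a TAG field for the tenant and a FLOAT32 vector field. Nothing here talks to a Redis server.

```python
import re
import struct

# Assumption: IDs are limited to letters, digits, and underscores so they never
# need escaping inside a key or inside a TAG filter.
_ID_PATTERN = re.compile(r"[A-Za-z0-9_]+")


def checked_id(value: str, label: str) -> str:
    """Fail closed on empty or malformed IDs rather than building a bad key."""
    if not isinstance(value, str) or not _ID_PATTERN.fullmatch(value):
        raise ValueError(f"invalid {label}: {value!r}")
    return value


def memory_key(tenant_id: str, memory_id: str) -> str:
    """Build a key of the form tenant:{tenant_id}:memory:{memory_id}."""
    tenant = checked_id(tenant_id, "tenant_id")
    memory = checked_id(memory_id, "memory_id")
    return f"tenant:{tenant}:memory:{memory}"


def pack_embedding(vector: list[float]) -> bytes:
    """Serialize an embedding as little-endian float32 bytes (4 bytes per value)."""
    return struct.pack(f"<{len(vector)}f", *vector)


def tenant_knn_query(tenant_id: str, k: int = 5) -> str:
    """Compose a query string: TAG pre-filter on the tenant, then a KNN clause.

    `tenant_id` and `embedding` are hypothetical schema field names; `$vec` is
    the query parameter that would carry the packed query embedding.
    """
    tenant = checked_id(tenant_id, "tenant_id")
    if k < 1:
        raise ValueError("k must be at least 1")
    return f"(@tenant_id:{{{tenant}}})=>[KNN {k} @embedding $vec AS score]"
```

Routing every key and every query through helpers like these keeps the prefix and the tenant filter in one place, but it remains a convention enforced by application code rather than by the database.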

The architecture looks elegant on a whiteboard. A single Redis cluster handles both the fast key-value retrieval for session state and the approximate nearest-neighbor search for semantic memory. Fewer moving parts, fewer services to operate, one monitoring stack.

Where Redis Starts to Crack Under Multi-Tenant Load

The problems emerge at scale, and they emerge in ways that are deeply uncomfortable for platform operators.

Namespace isolation is logical, not physical. Redis key prefixing is a convention, not an enforcement boundary. A bug in your tenant resolution middleware, a misconfigured pipeline, or a library that drops the prefix under certain error conditions can result in one tenant's embeddings being written into or retrieved from another tenant's namespace. There is no hard wall. The database engine itself does not understand tenants; only your application code does. At scale, this is not a theoretical risk. It is a matter of when, not if.

Index management does not compose well across tenants. A Redis vector index is its own object, created over one or more key prefixes; a key prefix by itself does not give a tenant a separate index. Running separate indexes per tenant (the only way to achieve true index-level isolation) means managing potentially thousands of individual Redis indexes, each with its own memory overhead, its own HNSW graph structure, and its own operational lifecycle. Index creation, deletion, and reindexing for a single churned tenant then competes for resources with every other tenant on the cluster, with measurable latency impact.
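To make the per-tenant index lifecycle concrete, here is a hedged sketch that only builds the command arguments for creating and dropping one index per tenant. The index naming scheme (`idx:tenant:{tenant_id}`), the `embedding` field name, and the default `m` and `ef_construction` values are assumptions chosen for illustration; the functions return plain lists and do not contact a server.

```python
import re

# Assumption: tenant IDs use a conservative character set.
_TENANT_ID = re.compile(r"[A-Za-z0-9_]+")


def _check_tenant(tenant_id: str) -> str:
    if not isinstance(tenant_id, str) or not _TENANT_ID.fullmatch(tenant_id):
        raise ValueError(f"invalid tenant_id: {tenant_id!r}")
    return tenant_id


def create_index_command(
    tenant_id: str, dim: int, m: int = 16, ef_construction: int = 200
) -> list[str]:
    """Arguments for an FT.CREATE call covering only this tenant's key prefix."""
    tenant = _check_tenant(tenant_id)
    if dim < 1:
        raise ValueError("dim must be positive")
    vector_attrs = [
        "TYPE", "FLOAT32",
        "DIM", str(dim),
        "DISTANCE_METRIC", "COSINE",
        "M", str(m),
        "EF_CONSTRUCTION", str(ef_construction),
    ]
    return [
        "FT.CREATE", f"idx:tenant:{tenant}",
        "ON", "HASH",
        "PREFIX", "1", f"tenant:{tenant}:memory:",
        "SCHEMA", "embedding", "VECTOR", "HNSW",
        str(len(vector_attrs)),  # number of attribute tokens that follow
        *vector_attrs,
    ]


def drop_index_command(tenant_id: str) -> list[str]:
    """Arguments for dropping a churned tenant's index."""
    return ["FT.DROPINDEX", f"idx:tenant:{_check_tenant(tenant_id)}"]
```

With a client such as redis-py, a list like this could be sent with `client.execute_command(*cmd)`. Multiply the create and drop calls by thousands of tenants and the lifecycle burden described above becomes visible.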

Memory is not elastic per tenant. Redis is an in-memory store. Vector embeddings for high-dimensional models (1536 dimensions for many current embedding models, 3072 for newer variants) consume significant RAM. In a multi-tenant environment, a small number of "heavy" tenants with large memory footprints can crowd out resources for all other tenants on the same cluster. Redis Cluster sharding helps, but it does not solve the noisy-neighbor problem for memory-intensive vector workloads without careful and ongoing capacity planning.
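A quick back-of-the-envelope calculation shows why. The sketch below counts only the raw float32 vector payload (4 bytes per dimension); it deliberately ignores HNSW graph links, key names, and metadata, so real usage will be higher. The figure of 5,000 memories per tenant is an assumption picked purely for illustration.

```python
BYTES_PER_FLOAT32 = 4


def raw_vector_bytes(num_vectors: int, dimensions: int) -> int:
    """Raw payload size of float32 embeddings, excluding all index overhead."""
    return num_vectors * dimensions * BYTES_PER_FLOAT32


def to_gib(num_bytes: int) -> float:
    """Convert bytes to gibibytes."""
    return num_bytes / 2**30


# One 1536-dimension vector is 6,144 bytes; one 3072-dimension vector is 12,288.
per_vector_1536 = raw_vector_bytes(1, 1536)
per_vector_3072 = raw_vector_bytes(1, 3072)

# Hypothetical fleet: 10,000 tenants with 5,000 memories each at 1536 dimensions.
fleet_bytes = raw_vector_bytes(10_000 * 5_000, 1536)
fleet_gib = to_gib(fleet_bytes)  # roughly 286 GiB of RAM for vectors alone
```

Doubling the dimension count doubles that number, and a handful of tenants with ten times the average history can account for a large share of it.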

Cross-session retrieval degrades as history grows. Redis HNSW indexes are optimized for low-latency approximate search, but their accuracy (recall rate) degrades as the index grows unless the graph was built with generous M and EF_CONSTRUCTION values. Those are build-time parameters, so raising them later means rebuilding the index. For tenants with long operational histories, the index can become large enough that recall drops below acceptable thresholds without a rebuild, and rebuilds are expensive and disruptive in production.
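Recall is only a useful signal if you actually measure it. One common way, sketched here under simplifying assumptions, is to compare the IDs an approximate index returns against an exact brute-force ranking over a sample of the tenant's vectors. The function names are hypothetical, and the approximate results are passed in as a plain list since this sketch does not call any index.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def exact_top_k(query: list[float], vectors: dict[str, list[float]], k: int) -> list[str]:
    """Ground truth: rank every stored vector by similarity and keep the best k IDs."""
    ranked = sorted(vectors, key=lambda vid: cosine(query, vectors[vid]), reverse=True)
    return ranked[:k]


def recall_at_k(approx_ids: list[str], exact_ids: list[str], k: int) -> float:
    """Fraction of the true top-k IDs that the approximate search also returned."""
    truth = set(exact_ids[:k])
    if not truth:
        raise ValueError("need at least one ground-truth ID")
    return len(truth & set(approx_ids[:k])) / len(truth)
```

Running a check like this periodically for the largest tenants gives an early warning before recall falls far enough for users to notice missing context.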

Architecture Two: Purpose-Built Vector Memory Stores

Purpose-built vector databases such as Pinecone, Weaviate, Qdrant, and Chroma were designed from the ground up to solve the problems that Redis treats as afterthoughts. Their core value proposition for multi-tenant LLM platforms centers on three capabilities: native namespace or collection isolation, distributed index management, and metadata-filtered semantic retrieval.

How Purpose-Built Vector Stores Handle Tenant Isolation

The isolation story varies meaningfully across vendors, and this is where the details matter enormously.

Pinecone offers namespaces within an index as a first-class primitive. Each namespace maintains a logically isolated partition of the index, and queries are scoped to a single namespace by default. The engine enforces this boundary at the query execution layer, not the application layer. For very large platforms, Pinecone's serverless tier also supports per-tenant indexes with independent scaling, though this introduces latency variability at cold-start that must be accounted for in SLA design.

Weaviate handles multi-tenancy through its native multi-tenancy feature, introduced and hardened over the past two years, which allows collections to be partitioned by tenant with each tenant's data stored in isolated shards. Tenant shards can be activated or deactivated independently, which is an extremely useful property for platforms that need to offload inactive tenants to reduce memory pressure without deleting their data.

Qdrant takes a similar approach with its payload-based filtering combined with collection-level isolation options. Its on-disk indexing capability is particularly relevant for multi-tenant deployments because it allows vector data to overflow to disk for inactive tenants while keeping hot tenants in memory, a capability Redis simply does not offer in the same way.

Chroma remains more appropriate for smaller deployments and development environments. Its multi-tenancy model is less mature than those of Pinecone or Weaviate for true production-scale isolation scenarios.

The Retrieval Quality Advantage

Beyond isolation, purpose-built stores offer a meaningful advantage in retrieval quality for cross-session context reconstruction. Metadata filtering combined with vector similarity search allows agents to retrieve memories that are both semantically relevant and temporally or categorically scoped. For example: "Retrieve the five most semantically similar memories to this query, but only from sessions in the last 90 days, and only tagged as user-confirmed facts rather than inferred context."

This kind of compound retrieval is possible in Redis, which accepts a filter expression alongside a KNN clause, but every condition, including the tenant scope, must be composed correctly by application code on each call, and that introduces additional failure surfaces. In Weaviate or Qdrant, it is a single query with a filter expression evaluated at the index level, which is both faster and safer.
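The semantics of that compound query can be pinned down with a small in-memory sketch: filter on recency and tag first, then rank what survives by similarity. This is not how any particular vector store implements it; the `Memory` type, the `kind` labels, and the function names are hypothetical, and a real engine would evaluate the filter inside the index rather than over a Python list.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Memory:
    memory_id: str
    embedding: tuple[float, ...]
    created_at: datetime
    kind: str  # hypothetical labels, e.g. "user_confirmed" or "inferred"


def cosine(a, b) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(
    query: tuple[float, ...],
    memories: list[Memory],
    now: datetime,
    max_age_days: int = 90,
    kind: str = "user_confirmed",
    k: int = 5,
) -> list[Memory]:
    """Keep only recent memories of the requested kind, then return the top k by similarity."""
    cutoff = now - timedelta(days=max_age_days)
    eligible = [m for m in memories if m.created_at >= cutoff and m.kind == kind]
    eligible.sort(key=lambda m: cosine(query, m.embedding), reverse=True)
    return eligible[:k]
```

Filtering before ranking matters: ranking first and filtering afterwards can return fewer than k results even when enough eligible memories exist.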

The Operational and Cost Overhead

The honest downside of purpose-built vector stores is that they add a service to your operational surface. You now have a vector database to provision, monitor, scale, back up, and version alongside your existing infrastructure. For teams already running Redis and not yet at scale, this overhead is real and should not be dismissed.

Cost is also a genuine consideration. Managed vector database services bill along several axes (storage volume, query volume, index size) that can surprise teams used to Redis's simpler memory-based pricing model. A platform with 50,000 tenants each storing moderate episodic memory can generate vector storage bills that dwarf equivalent Redis memory costs, especially if the managed service charges per-query at high retrieval volumes.

The Bleeding Problem: Tenant Embedding Leakage in Practice

Let's talk about the failure mode that keeps platform architects awake at night: tenant embedding leakage. This is the scenario where one tenant's vector data becomes retrievable in the context of another tenant's queries. In an LLM platform, this is not just a data integrity bug. It is a potential compliance catastrophe, especially in regulated industries like healthcare, legal, or financial services where tenant data carries contractual and regulatory isolation requirements.

How Leakage Happens in Redis

In Redis-based architectures, leakage typically occurs through one of three mechanisms:

- Prefix resolution bugs: Application code fails to correctly resolve the tenant context under certain conditions (race conditions, middleware exceptions, fallback paths) and writes or reads from an unscoped or incorrectly scoped key.

- Shared index queries: If a single Redis vector index covers all tenants (a common shortcut taken under time pressure), a query without an explicit metadata filter will return results from all tenants. One missing filter clause is all it takes.

- Pipeline and batch processing errors: Background jobs that process embeddings in bulk are particularly prone to losing tenant context when processing queues that mix tenant data.
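The shared-index mechanism is easy to reproduce in miniature. In the sketch below, `SharedIndex` is a hypothetical stand-in for one index covering all tenants, with an optional filter, and `TenantScopedIndex` is one possible mitigation: a wrapper that binds the tenant once and offers no unfiltered path. Similarity ranking is left out to keep the focus on scoping.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entry:
    tenant_id: str
    memory_id: str
    text: str


class SharedIndex:
    """Stand-in for a single index over all tenants. The filter is optional,
    which is exactly the hazard: forgetting it returns everyone's data."""

    def __init__(self) -> None:
        self._entries: list[Entry] = []

    def add(self, entry: Entry) -> None:
        self._entries.append(entry)

    def search(self, tenant_id: Optional[str] = None) -> list[Entry]:
        return [e for e in self._entries if tenant_id is None or e.tenant_id == tenant_id]


class TenantScopedIndex:
    """Wrapper that fixes the tenant at construction and always applies the filter."""

    def __init__(self, index: SharedIndex, tenant_id: str) -> None:
        if not tenant_id:
            raise ValueError("tenant_id is required")
        self._index = index
        self._tenant_id = tenant_id

    def search(self) -> list[Entry]:
        return self._index.search(tenant_id=self._tenant_id)
```

A wrapper like this narrows the ways application code can get it wrong, but the underlying index still accepts unfiltered queries from any caller that bypasses it.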

How Purpose-Built Stores Reduce Leakage Risk

Purpose-built stores reduce leakage risk by pushing the isolation boundary down into the storage and query execution engine. When Weaviate's multi-tenancy feature is correctly configured, a query issued against Tenant A's shard physically cannot return documents from Tenant B's shard, because the shards are separate storage units. The application does not have to remember to add a filter. The engine enforces the boundary unconditionally.

This is the architectural principle of defense in depth applied to data isolation: the system is safe even when the application layer makes a mistake, rather than requiring the application layer to be perfect at all times.
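A toy model helps show what "the engine enforces the boundary" means structurally. In this sketch each tenant gets its own storage unit, every read and write must name a tenant, and there is simply no code path that scans across shards. It also models activating and deactivating a tenant without deleting its data. The class and method names are hypothetical and do not correspond to any vendor's API.

```python
class TenantInactiveError(RuntimeError):
    """Raised when a deactivated tenant's shard is accessed."""


class ShardedMemoryStore:
    """Toy store with one shard per tenant and no cross-shard query path."""

    def __init__(self) -> None:
        self._shards: dict[str, list[str]] = {}
        self._active: set[str] = set()

    def create_tenant(self, tenant_id: str) -> None:
        if not tenant_id or tenant_id in self._shards:
            raise ValueError(f"cannot create tenant: {tenant_id!r}")
        self._shards[tenant_id] = []
        self._active.add(tenant_id)

    def _shard(self, tenant_id: str) -> list[str]:
        # Every operation resolves exactly one shard or fails.
        if tenant_id not in self._shards:
            raise KeyError(f"unknown tenant: {tenant_id!r}")
        if tenant_id not in self._active:
            raise TenantInactiveError(tenant_id)
        return self._shards[tenant_id]

    def write(self, tenant_id: str, text: str) -> None:
        self._shard(tenant_id).append(text)

    def read_all(self, tenant_id: str) -> list[str]:
        return list(self._shard(tenant_id))

    def deactivate(self, tenant_id: str) -> None:
        """Take a tenant offline while keeping its data."""
        if tenant_id not in self._shards:
            raise KeyError(f"unknown tenant: {tenant_id!r}")
        self._active.discard(tenant_id)

    def activate(self, tenant_id: str) -> None:
        if tenant_id not in self._shards:
            raise KeyError(f"unknown tenant: {tenant_id!r}")
        self._active.add(tenant_id)
```

The design point is that a forgotten filter cannot leak data here, because there is no unfiltered operation to forget it on.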

That said, purpose-built stores are not immune to misconfiguration. Incorrect collection setup, shared indexes used for cost savings, or improper namespace routing at the API gateway layer can all reintroduce leakage risk. The point is not that purpose-built stores make leakage impossible, but that they make correct isolation the default rather than a convention that must be actively maintained.

Head-to-Head Comparison: The Scorecard

Here is a direct comparison across the dimensions that matter most for production multi-tenant LLM platforms:

- Isolation boundary enforcement: Redis relies on application-layer convention. Purpose-built stores enforce isolation at the engine layer. Advantage: purpose-built stores, clearly.

- Cross-session retrieval latency: Redis wins on raw latency for small-to-medium tenant memory footprints. Purpose-built stores with on-disk indexing introduce higher p99 latency for cold tenants. Advantage: Redis for hot-path retrieval, purpose-built for large-scale recall accuracy.

- Operational complexity: Redis is simpler to operate for teams already running it. Purpose-built stores add a new service dependency. Advantage: Redis for smaller teams, purpose-built for larger organizations with dedicated infrastructure teams.

- Recall accuracy at scale: Purpose-built stores maintain higher recall rates as tenant memory grows due to better index management and tuning options. Advantage: purpose-built stores.

- Metadata-filtered retrieval: Purpose-built stores handle compound vector-plus-filter queries natively and efficiently. Redis supports filtered KNN queries but leaves tenant scoping to application-level logic. Advantage: purpose-built stores.

- Tenant lifecycle management (onboard/offboard): Purpose-built stores with native multi-tenancy support tenant activation and deactivation without cluster-wide impact. Redis requires careful key management and index deletion. Advantage: purpose-built stores.

- Cost at low tenant count: Redis is cheaper and simpler for platforms with a few thousand tenants or fewer. Advantage: Redis.

- Cost at high tenant count: Managed vector databases can become expensive at very high tenant counts with large memory footprints. Advantage: situational; requires modeling.

- Compliance and audit readiness: Purpose-built stores with physical shard isolation are significantly easier to audit for data residency and tenant isolation compliance. Advantage: purpose-built stores.

The Hybrid Architecture: What Production Systems Actually Use

Here is the reality that neither camp likes to advertise: the most resilient multi-tenant LLM platforms in production today do not choose one or the other. They use both, layered by memory type and access pattern.

The pattern that has emerged as a pragmatic standard looks roughly like this:

- Redis for working memory and session state: Current session context, tool call results, short-term agent scratchpad, and active conversation history live in Redis. This data is ephemeral, high-throughput, and does not require semantic retrieval. Redis is the right tool here.

- Purpose-built vector store for episodic and semantic memory: Anything that needs to survive session boundaries and be retrieved semantically goes into a purpose-built store with proper tenant isolation. This is the long-term memory layer.

- A memory consolidation pipeline between the two: At session end (or at configurable checkpoints), a background process distills the session's Redis working memory into vector embeddings and writes them to the tenant's isolated namespace in the purpose-built store. This pipeline is where tenant context must be carefully preserved and validated.

This hybrid approach gives you Redis's latency advantages for in-session operations while giving you the isolation guarantees and semantic retrieval quality of a purpose-built store for cross-session context reconstruction. The tradeoff is operational complexity: you now have two memory systems to keep synchronized, and the consolidation pipeline is a critical path that requires robust error handling and tenant context validation at every step.
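The validation step in that pipeline might look something like the sketch below. It checks every working-memory item against the expected tenant and session before anything is embedded, and fails the whole batch on a mismatch instead of writing partial results. The types, the `tenant:{tenant_id}` namespace format, and the caller-supplied `embed` function are all assumptions; a real pipeline would also summarize or distill items rather than embedding each one as-is.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class TenantMismatchError(ValueError):
    """Raised when a working-memory item does not belong to the expected tenant or session."""


@dataclass(frozen=True)
class WorkingItem:
    tenant_id: str
    session_id: str
    text: str


@dataclass(frozen=True)
class LongTermRecord:
    namespace: str  # destination namespace in the long-term store
    session_id: str
    text: str
    embedding: tuple[float, ...]


def consolidate(
    tenant_id: str,
    session_id: str,
    items: Sequence[WorkingItem],
    embed: Callable[[str], Sequence[float]],
) -> list[LongTermRecord]:
    """Validate the whole batch first, then embed; nothing is produced on a mismatch."""
    if not tenant_id or not session_id:
        raise ValueError("tenant_id and session_id are required")
    for item in items:
        if item.tenant_id != tenant_id or item.session_id != session_id:
            raise TenantMismatchError(
                f"item for {item.tenant_id!r}/{item.session_id!r} "
                f"found in batch for {tenant_id!r}/{session_id!r}"
            )
    namespace = f"tenant:{tenant_id}"
    return [
        LongTermRecord(namespace, session_id, item.text, tuple(embed(item.text)))
        for item in items
    ]
```

Validating before embedding keeps a mixed batch from ever reaching the write path, which is the cheapest point at which to catch a lost tenant context.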

Decision Framework: Which Architecture Is Right for Your Platform?

Use this framework to guide your decision based on where your platform actually sits today:

Choose Redis-only if: You have fewer than 5,000 tenants, your compliance requirements do not mandate physical data isolation, your team does not have bandwidth to operate an additional data service, and your per-tenant memory footprint is small and predictable.

Choose a purpose-built vector store as your primary memory layer if: You are operating at scale with thousands to hundreds of thousands of tenants, you serve regulated industries with strict data isolation requirements, your tenants have long operational histories that require high-recall semantic retrieval, or you have experienced or are concerned about embedding leakage incidents.

Choose the hybrid architecture if: You need sub-millisecond in-session performance combined with reliable cross-session semantic retrieval, your platform is growing rapidly and you expect to hit scale thresholds within the next 12 to 18 months, or you are building a platform where tenant trust and compliance are core product differentiators.

Conclusion: The Right Question Is Not Which Tool, But Where the Boundary Lives

The Redis vs. purpose-built vector store debate is ultimately a debate about where you want your tenant isolation boundary to live. In Redis, that boundary lives in your application code, which means it is only as reliable as your most tired engineer's last pull request. In a purpose-built vector store with native multi-tenancy, that boundary lives in the storage engine, which means it holds even when your application layer fails.

For multi-tenant LLM platforms where tenant data is sensitive, where cross-session context retrieval is a core product feature, and where scale is the goal rather than the exception, the architecture that survives is the one where isolation is enforced by the infrastructure rather than promised by the application. Purpose-built vector memory stores, used correctly and layered intelligently with Redis for hot-path working memory, represent the more defensible long-term architecture.

The cost of getting this wrong is not a slow query or a cache miss. It is one tenant reading another tenant's business context through an AI agent. That is not a bug you patch in a hotfix. That is a trust event that ends customer relationships. Architect accordingly.