Reactive vs. Proactive AI Agent Observability: Which Monitoring Philosophy Actually Catches Multi-Tenant Workflow Failures Before They Reach the Foundation Model Layer

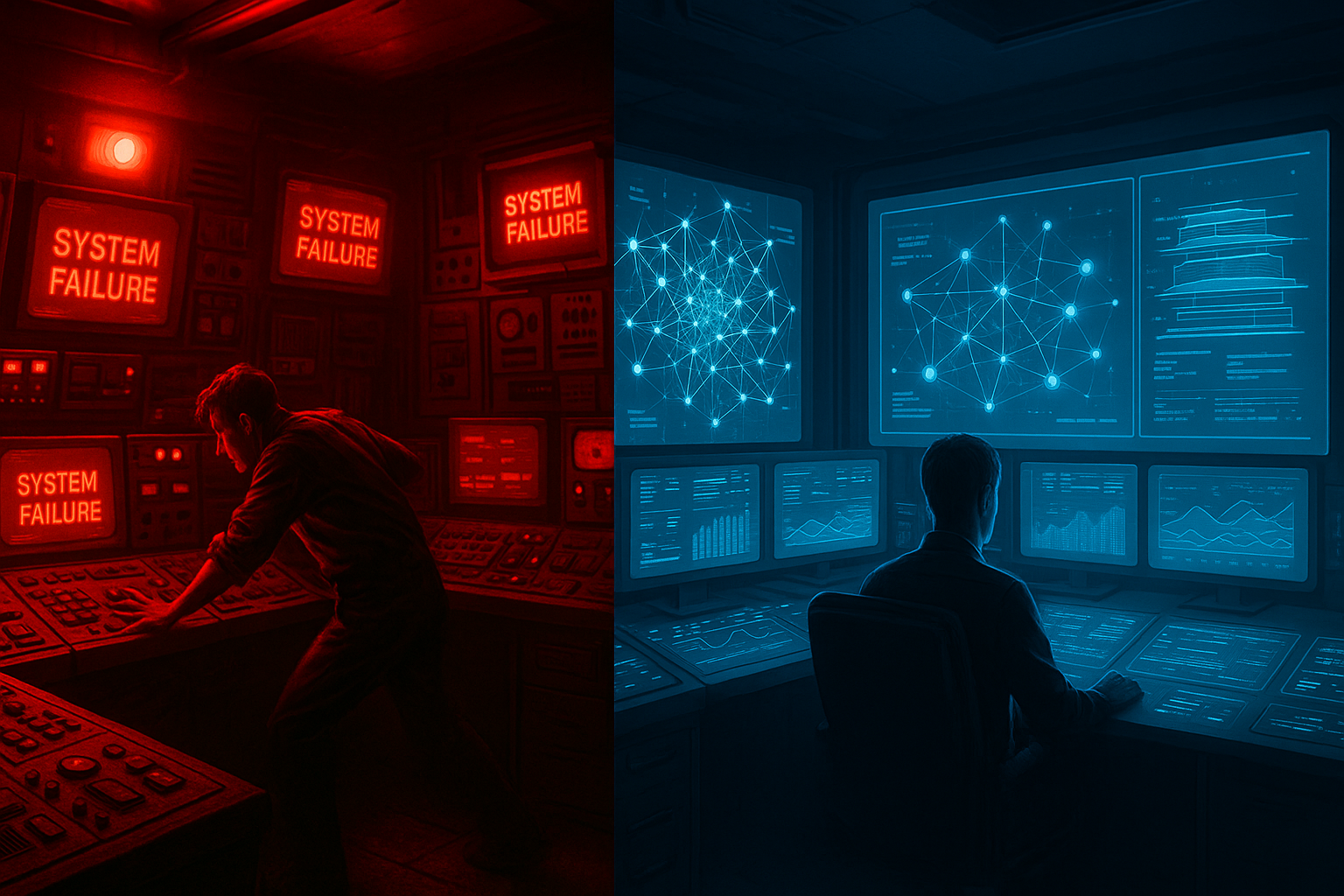

There is a quiet crisis unfolding inside enterprise AI stacks right now. Multi-tenant agentic workflows are failing in ways that traditional observability tooling was never designed to catch. By the time an alert fires, the damage is already done: a corrupted context window has been handed to your foundation model, a tool-call chain has silently hallucinated its way through three downstream services, or a tenant's session state has bled into another's reasoning loop. The bill arrives at the foundation model layer, but the crime was committed several steps upstream.

The debate between reactive and proactive AI agent observability is not just an academic one. In 2026, with agentic pipelines running mission-critical workloads across healthcare, legal, fintech, and enterprise SaaS, choosing the wrong monitoring philosophy is not a configuration mistake. It is an architectural liability. This article breaks down both philosophies head-to-head, examines where each one succeeds and catastrophically fails, and argues for a specific approach that the most resilient teams are quietly adopting today.

Why Multi-Tenant AI Workflows Break Differently Than Everything Else

Before comparing philosophies, it is worth establishing exactly why multi-tenant agentic systems represent a uniquely hostile environment for monitoring. Unlike a traditional microservices mesh, an AI agent workflow has several properties that make failure modes deeply non-linear:

- Non-determinism at every hop: The same input routed through the same agent graph can produce different tool calls, different memory retrievals, and different sub-agent delegations on each run. Thresholds and baselines that work in deterministic systems become noise in agentic ones.

- Shared context surfaces: In multi-tenant deployments, vector stores, retrieval-augmented generation (RAG) indexes, and memory buffers are frequently partitioned at the application layer rather than the infrastructure layer. A misconfigured tenant filter is invisible to infrastructure-level monitoring but catastrophic at runtime.

- Cascading tool-call amplification: A single bad agent decision at step two of a twelve-step workflow can exponentially amplify token consumption, API costs, and downstream side effects by the time execution reaches step nine. Reactive systems almost always catch this at step nine.

- Latent semantic failures: Some of the most dangerous failures produce no error codes, no exceptions, and no anomalous latency. The agent completes successfully. The output is just wrong in ways that only matter three days later when a human reviews it.

These four properties define the battlefield. Now let us look at how each philosophy performs on it.

Reactive AI Agent Observability: The Established Standard

What It Is

Reactive observability is the dominant paradigm inherited from traditional software engineering. It operates on a collect-then-alert model: gather logs, traces, and metrics from running systems, define thresholds or anomaly detection rules, and trigger notifications when something crosses a boundary. In the AI agent context, this typically means capturing LLM call latencies, token counts, tool invocation logs, error rates, and cost metrics, then surfacing dashboards and alerts when values deviate from expected ranges.

Tools like LangSmith, Arize Phoenix, Helicone, and the observability modules baked into frameworks like LangGraph and AutoGen all operate primarily within this paradigm. They are excellent at answering the question: what happened?

Where Reactive Observability Genuinely Shines

Reactive monitoring is not without real merit in agentic systems. It excels in several specific scenarios:

- Post-incident forensics: Full trace capture across agent steps, tool calls, memory reads and writes, and model responses gives engineers an extraordinarily detailed picture of what occurred. For compliance-heavy industries, this audit trail is non-negotiable.

- Cost attribution in multi-tenant environments: Reactive systems can accurately bill-back token consumption, compute time, and third-party API calls to specific tenants after the fact. This is a genuine business requirement that reactive tooling handles well.

- Known failure pattern detection: Once a failure mode has been observed and catalogued, reactive systems can be tuned to catch it reliably. If tenant A's RAG pipeline has historically failed when retrieval scores drop below 0.62, you can set that threshold and catch recurrences quickly.

- Baseline establishment: You cannot build proactive systems without first understanding what normal looks like. Reactive observability is the essential first layer for generating the behavioral baselines that proactive systems consume.

The Core Failure Mode: The Foundation Model Tax

Here is where reactive observability breaks down in multi-tenant agentic architectures. Consider a realistic failure scenario in a legal document review agent serving fifty enterprise tenants simultaneously.

At 2:14 AM, a background indexing job for Tenant 17 writes a malformed metadata record into a shared vector store partition. The partition filter logic has a subtle bug that only triggers when the tenant's document count exceeds a specific threshold, which it crossed during the overnight batch. At 9:03 AM, an analyst at Tenant 22 submits a contract review request. The agent's retrieval step pulls three documents from Tenant 17's partition due to the filter bug. The agent proceeds to reason over this contaminated context. By 9:04 AM, the malformed context has been submitted to the foundation model. The model, working with what it was given, produces a confidently wrong legal summary. The reactive monitoring system fires an alert at 9:47 AM when a human reviewer flags the output as incorrect.

The reactive system did its job. It caught the failure. But the failure had already reached the foundation model layer forty-three minutes earlier. In a regulated environment, that single bad inference event can trigger a compliance review, a client notification obligation, and a reputational incident. Reactive observability caught the symptom. The cause had already propagated.

This is what we call the Foundation Model Tax: the cost, in tokens, money, latency, and risk, of allowing a failure to propagate all the way to the model before it is detected. In high-volume multi-tenant systems, this tax compounds rapidly.

Proactive AI Agent Observability: The Emerging Discipline

What It Is

Proactive observability shifts the detection boundary upstream. Rather than waiting for a failure to manifest in outputs or metrics, it instruments the preconditions and intermediate state transitions of agent workflows to identify failure trajectories before they reach irreversible execution points. The core question changes from what happened? to what is about to happen, and should we allow it?

This is a fundamentally different architectural posture. It requires treating agent workflows not as black boxes to be observed from the outside, but as state machines whose internal transitions can be validated, scored, and intercepted in real time.

The Four Pillars of Proactive Agent Observability

1. Pre-Execution Context Validation

Before any agent step submits a context payload to a tool or a foundation model, a validation layer scores the payload for integrity. This includes tenant isolation checks (does this context contain data artifacts that belong to a different tenant?), semantic coherence scoring (does the retrieved context actually relate to the user's intent?), and injection pattern detection (does the context contain adversarial prompt structures that may have been embedded in retrieved documents?). Failures here abort the step before it executes, not after.

2. Trajectory Anomaly Detection

In a well-instrumented agentic system, you can build probabilistic models of what a healthy workflow trajectory looks like for a given task class. A contract review workflow that normally involves four tool calls and two retrieval steps should raise a flag if it is suddenly on its seventh tool call with no retrieval steps completed. Trajectory anomaly detection does not wait for the workflow to finish. It evaluates the execution path in real time and can pause, reroute, or terminate workflows that are diverging from expected behavioral envelopes.

3. Tenant Isolation Continuous Verification

Rather than trusting that partition logic is correctly applied at indexing time, proactive systems re-verify tenant isolation at every retrieval boundary during execution. This is implemented as a lightweight cryptographic tagging scheme or a secondary metadata verification pass that runs in the retrieval pipeline itself, not just in the application layer. Any document or memory artifact that cannot be positively verified as belonging to the requesting tenant is quarantined before it enters the agent's context window.

4. Semantic Drift Alerting in Long-Running Sessions

Multi-tenant agentic systems increasingly support long-running, stateful sessions that persist across hours or days. These sessions accumulate context, and that context can drift semantically in ways that corrupt the agent's reasoning without producing any discrete error event. Proactive systems embed periodic semantic coherence checks into session management, comparing the current session state against the original task intent and flagging sessions where the agent's working context has drifted beyond a configurable semantic distance threshold.

The Honest Costs of Going Proactive

Proactive observability is not a free upgrade. It comes with real engineering costs that teams need to budget for honestly:

- Latency overhead: Pre-execution validation and trajectory scoring add latency to every agent step. In latency-sensitive workflows, this overhead must be carefully optimized. A poorly implemented proactive layer can add 80 to 200 milliseconds per step, which compounds across multi-step pipelines.

- False positive management: Trajectory anomaly detection requires careful calibration. An overly aggressive anomaly detector will interrupt legitimate but unusual workflows, degrading user experience and eroding trust in the monitoring system itself.

- Baseline cold-start problem: Proactive systems need behavioral baselines to function. New tenants, new task types, and new agent configurations all require a warm-up period during which proactive detection is less effective. Teams must plan for hybrid operation during this period.

- Instrumentation depth requirement: Proactive observability requires deep framework-level instrumentation. If your agent framework does not expose intermediate state transitions cleanly, you will need to build or extend instrumentation hooks yourself. This is non-trivial for teams running heterogeneous agent stacks.

Head-to-Head: The Decision Matrix

Let us put both philosophies side by side across the dimensions that matter most in multi-tenant agentic deployments:

- Failure detection timing: Reactive catches failures after foundation model submission. Proactive catches failures before submission, at the context assembly or tool-call planning stage.

- Tenant isolation assurance: Reactive relies on post-hoc log analysis to detect cross-tenant contamination. Proactive enforces isolation as a runtime invariant at every retrieval boundary.

- Novel failure mode coverage: Reactive requires prior observation of a failure pattern to detect it reliably. Proactive trajectory anomaly detection can surface novel failure modes by identifying behavioral deviations from established baselines, even without a prior incident.

- Compliance and audit: Reactive wins here. Full trace capture and immutable audit logs are better served by reactive architectures. Proactive systems should complement, not replace, this capability.

- Cost containment: Proactive wins decisively. By aborting runaway tool-call chains and malformed context submissions early, proactive monitoring can reduce wasted foundation model token spend by 30 to 60 percent in high-failure-rate workflows, based on internal benchmarks reported by teams running large-scale agentic deployments.

- Engineering complexity: Reactive is significantly simpler to implement and maintain. Proactive requires dedicated investment in instrumentation infrastructure, baseline modeling, and calibration workflows.

- Latency impact: Reactive has near-zero runtime overhead. Proactive introduces measurable but manageable overhead when properly optimized.

The Architecture That Actually Works in 2026: Layered Observability

The teams shipping the most reliable multi-tenant agentic systems in 2026 are not choosing between reactive and proactive observability. They are implementing them as complementary layers with clearly defined responsibilities. Here is what that architecture looks like in practice:

Layer 1: Proactive Pre-Flight Checks (Per Agent Step)

Every agent step that touches a retrieval system, a tool call, or a context assembly operation passes through a lightweight pre-flight validation layer. This layer runs tenant isolation verification, basic semantic coherence scoring, and injection pattern detection. It is synchronous, fast (target under 20 milliseconds), and has a clear abort-or-proceed output. This is your first line of defense against the Foundation Model Tax.

Layer 2: Proactive Trajectory Monitoring (Per Workflow Execution)

A separate asynchronous process evaluates the running execution trajectory against behavioral baselines for the current task class and tenant profile. This layer does not block execution but can issue pause signals to the orchestration layer when trajectory anomaly scores exceed configurable thresholds. It operates on a slightly longer time horizon than pre-flight checks, evaluating patterns across multiple steps rather than individual operations.

Layer 3: Reactive Full-Trace Capture (Per Session)

Every agent step, tool call, model invocation, memory operation, and output is captured in full and stored in an append-only trace store. This layer supports post-incident forensics, compliance auditing, cost attribution, and the ongoing baseline generation that feeds Layers 1 and 2. It is the foundation that makes the proactive layers smarter over time.

Layer 4: Reactive Alerting and Dashboards (Operational)

Traditional threshold and anomaly-based alerting operates over the trace data from Layer 3. This layer catches failures that Layers 1 and 2 missed, supports operational monitoring by on-call engineers, and provides the business-level metrics that stakeholders need. It is the safety net, not the primary defense.

Signals That Your Team Is Under-Invested in Proactive Observability

If any of the following are true for your agentic deployment, you are likely paying a significant and unnecessary Foundation Model Tax:

- Your team discovers multi-tenant data contamination incidents through user reports rather than automated detection.

- Your primary observability metric for agent quality is output error rate rather than context integrity rate.

- Runaway tool-call loops are terminated by token budget exhaustion rather than trajectory anomaly detection.

- Your tenant isolation guarantees are documented at the application layer but not verified at runtime during retrieval operations.

- You have no semantic coherence baseline for any of your long-running agent sessions.

- Your observability tooling was designed for LLM API monitoring and has not been extended to cover multi-step agent orchestration state.

Conclusion: The Philosophy Determines the Blast Radius

Reactive observability is not wrong. It is incomplete. In a world where AI agents are executing consequential multi-step workflows across shared infrastructure serving dozens or hundreds of tenants simultaneously, the question is not whether you will have failures. You will. The question is where in the execution pipeline those failures are caught.

Every step a failure travels before detection increases its blast radius: more tokens wasted, more downstream services affected, more tenant data potentially exposed, more compliance risk accumulated. The foundation model layer is the most expensive place in your entire pipeline to discover that something went wrong three steps ago. Proactive observability is the engineering discipline of systematically moving the detection boundary upstream, closer to the origin of failure and further from the point of no return.

The teams building the most reliable agentic systems in 2026 are not waiting for their foundation model to tell them something went wrong. They are building systems that know something is about to go wrong and act on that knowledge before the bill arrives. That is not just a better monitoring strategy. It is a fundamentally different relationship with failure, and in multi-tenant agentic infrastructure, that relationship is the difference between an incident and an architecture.