FAQ: Why Are Backend Engineers Scrambling to Build Per-Tenant AI Agent Consent and Data Residency Enforcement Pipelines in Q2 2026, and What Does a Legally Defensible Cross-Border Agentic Workflow Architecture Actually Look Like?

If you have spent any time in backend engineering Slack channels, conference hallways, or architecture review meetings in Q2 2026, you have probably noticed a recurring theme: engineers are not just building AI agents anymore. They are scrambling to build the governance scaffolding around them. Consent enforcement, per-tenant data residency, cross-border workflow routing, audit trails that satisfy lawyers rather than just logging dashboards. The pressure is real, the deadlines are tighter than ever, and the regulatory landscape has shifted dramatically in the past twelve months.

This FAQ is designed to cut through the noise. Whether you are a senior backend engineer trying to convince your CTO to invest in the right infrastructure, a platform architect drafting a compliance-ready design doc, or a technical lead whose legal team just handed you a 40-page questionnaire about your AI pipeline, this article has you covered.

Q1: What exactly changed in the regulatory landscape that is making this so urgent right now?

A lot changed, and it happened faster than most engineering teams anticipated. Here is the short version:

- The EU AI Act's obligations kept phasing in through early 2026. The Act applies on a staggered schedule rather than through numbered tiers, and high-risk and general-purpose AI systems deployed in the EU face mandatory transparency, consent logging, and human-oversight requirements as each phase takes effect. Agentic systems that take autonomous actions on behalf of users fall squarely into scrutiny territory, and enforcement actions have already begun in Germany and France.

- The regulators behind Brazil's LGPD and India's DPDP Act both issued updated guidance in late 2025 that explicitly addressed automated decision-making agents. These updates require that any AI system processing personal data on behalf of a data principal must be able to demonstrate which consent artifact authorized which specific action, at the time of that action.

- The US state patchwork got worse, not better. By Q1 2026, seventeen US states had enacted their own AI-specific privacy amendments, with conflicting definitions of "automated processing," "sensitive inference," and "cross-context data sharing." Multi-tenant SaaS platforms serving US enterprise customers now routinely deal with three to five different state regimes simultaneously.

- Agentic AI changed the threat model entirely. Earlier compliance frameworks were designed around request-response systems. An agent that autonomously browses, retrieves, summarizes, writes, and posts content across multiple external APIs in a single workflow session is a fundamentally different beast. A single agentic task can touch ten jurisdictions in under two seconds.

The net result: legal and compliance teams that were previously content with a checkbox in a privacy policy are now demanding that engineering teams produce machine-readable, per-action consent provenance. That is a very different engineering problem.

Q2: What is "per-tenant consent enforcement" and why can't you just handle it at the application layer?

Per-tenant consent enforcement means that every AI agent action, every tool call, every data retrieval, and every external API invocation is gated against a consent record that is specific to the tenant (the organization or end-user) on whose behalf the action is being taken. It is not a global feature flag. It is not a single GDPR banner. It is a runtime policy engine that evaluates, at the moment of execution, whether the current action is authorized by a valid, in-scope consent artifact for this specific tenant.

The reason you cannot just handle this at the application layer comes down to three problems:

1. Agent workflows are non-deterministic by nature

When a large language model drives a tool-use loop, the sequence of tool calls is not known at request time. The agent decides, mid-flight, to call a CRM API, then a document search index, then an external enrichment service. A traditional application-layer consent check at the entry point of the request cannot anticipate which downstream tools will be invoked. You need enforcement that travels with the execution context, not just at the front door.

2. Multi-tenant platforms share infrastructure

In a SaaS platform serving hundreds of enterprise tenants, the same agent runtime, the same vector database, the same tool registry may serve Tenant A (a German healthcare company with strict GDPR health-data restrictions) and Tenant B (a US fintech with CCPA and SOC 2 requirements) simultaneously. Application-layer logic that conflates these contexts is a compliance disaster waiting to happen. The enforcement layer must be tenant-isolated at the infrastructure level, not just the code level.

3. Audit requirements demand immutability

Regulators in the EU and Brazil are now asking for audit logs that prove, after the fact, that a specific consent record was evaluated before a specific action was taken. An application-layer check that writes a log line to a mutable database does not satisfy this. You need cryptographically signed, append-only consent evaluation receipts tied to agent execution traces.
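To make the first problem concrete, here is a minimal sketch of enforcement that travels with the execution context instead of sitting at the request entry point. It assumes Python's standard `contextvars` module; `ExecutionContext`, `guarded`, `run_for_tenant`, and the tool names are hypothetical names chosen for illustration, not part of any agent framework.

```python
import functools
from contextvars import ContextVar
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionContext:
    tenant_id: str
    allowed_tools: frozenset  # assumed to be derived from the tenant's consent record


class ConsentDenied(Exception):
    """Raised when a tool call is not covered by the tenant's consent."""


# Ambient context: visible to every tool call made during the agent run.
_current = ContextVar("execution_context")


def guarded(tool_name):
    """Decorator that checks the ambient tenant context on every call."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(*args, **kwargs):
            ctx = _current.get()  # LookupError if no context is set (fails closed)
            if tool_name not in ctx.allowed_tools:
                raise ConsentDenied(f"{ctx.tenant_id}: {tool_name} not authorized")
            return fn(*args, **kwargs)
        return inner
    return wrap


@guarded("crm_lookup")
def crm_lookup(customer_id):
    return {"customer_id": customer_id}


@guarded("enrichment")
def enrich(record):
    return {**record, "enriched": True}


def run_for_tenant(ctx, fn, *args):
    """Bind the tenant context for the duration of one agent step."""
    token = _current.set(ctx)
    try:
        return fn(*args)
    finally:
        _current.reset(token)
```

Because the check lives on each tool rather than on the request handler, it does not matter which tools the model decides to call mid-flight: every one of them is evaluated against the same tenant context.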

Q3: What is data residency enforcement in the context of agentic workflows, and why is it so hard?

Data residency enforcement means ensuring that data belonging to a tenant in a specific jurisdiction is processed, stored, and transmitted only within that jurisdiction (or a set of approved jurisdictions defined by the tenant's data processing agreement). For a traditional REST API, this is relatively straightforward: route requests to the right regional endpoint, store records in the right regional database cluster, done.

For agentic workflows, it becomes considerably more complex for the following reasons:

- Tool calls are implicit data transfers. When an agent calls a web search tool, it may be sending a query derived from a user's personal data to a third-party search provider whose servers are in a different country. That is a cross-border data transfer, and it may require a legal basis under GDPR or LGPD. Most agent frameworks do not model this at all.

- LLM inference itself is a data residency question. If your agent sends a prompt containing a European user's personal data to an LLM inference endpoint hosted in the United States, you have potentially violated data residency requirements unless you rely on a valid transfer mechanism (such as Standard Contractual Clauses or an adequacy decision) or avoid the transfer altogether by using a regional inference endpoint. As of 2026, most major LLM providers offer regional inference, but engineers must explicitly route to the correct endpoint per tenant, not rely on defaults.

- Memory and context stores are often global by default. Agent memory systems, vector stores for retrieval-augmented generation, and session context databases are frequently deployed as global services for performance reasons. Tenant-specific data written into a global vector index by a German tenant's agent session may be co-mingled with data from other tenants in ways that violate residency requirements.

- Multi-step workflows cross boundaries mid-execution. An agent workflow that starts in an EU-compliant environment may invoke a sub-agent or a third-party plugin that runs in a US data center. Without explicit boundary enforcement at each hop, residency guarantees collapse.
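The inference-routing point above can be illustrated with a small sketch. It assumes each tenant carries an ordered list of approved regions; the endpoint URLs, `REGIONAL_ENDPOINTS`, and `select_inference_endpoint` are hypothetical placeholders, since real endpoint names depend on your LLM provider.

```python
class ResidencyViolation(Exception):
    """Raised when no compliant inference endpoint exists for a tenant."""


# Hypothetical regional endpoints; substitute your provider's real URLs.
REGIONAL_ENDPOINTS = {
    "eu": "https://eu.llm.example.invalid/v1/chat",
    "us": "https://us.llm.example.invalid/v1/chat",
}


def select_inference_endpoint(approved_regions, endpoints=REGIONAL_ENDPOINTS):
    """Return (region, url) for the first approved region that has an endpoint.

    There is deliberately no global default: if nothing matches, the call
    fails instead of silently routing to an out-of-region endpoint.
    """
    for region in approved_regions:
        if region in endpoints:
            return region, endpoints[region]
    raise ResidencyViolation(
        f"no inference endpoint in approved regions: {list(approved_regions)}"
    )
```

The design choice worth noting is the absence of a fallback. A router that quietly falls back to a default region turns a configuration gap into a cross-border transfer.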

Q4: What does a legally defensible cross-border agentic workflow architecture actually look like?

This is the big question, and the honest answer is that no single vendor has shipped a complete, off-the-shelf solution as of Q2 2026. Most engineering teams are assembling this from components. Here is the reference architecture that leading platform teams are converging on:

Layer 1: The Tenant Policy Store

Every tenant gets a structured policy record that encodes: approved data residency regions, consented data categories, approved tool/integration allowlist, data retention limits, and human-in-the-loop (HITL) thresholds. This policy store is the source of truth. It is versioned, auditable, and immutable (append-only with timestamps). Think of it as a per-tenant "AI Constitution" that the rest of the system enforces.

Practically, this is often built as a combination of a policy-as-code framework (Open Policy Agent is the most common choice in 2026) and a consent management database that maps consent artifacts (signed user agreements, DPA records, data subject authorizations) to specific data categories and processing purposes.
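As a rough illustration of the data model, the following sketch shows a versioned, append-only policy record held in memory. The field names mirror the list above, but `TenantPolicy` and `PolicyStore` are hypothetical; a production store would sit on a durable database and would likely feed a policy engine rather than being queried directly.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class TenantPolicy:
    tenant_id: str
    version: int
    effective_at: datetime
    residency_regions: frozenset
    consented_categories: frozenset
    allowed_tools: frozenset
    retention_days: int
    hitl_categories: frozenset  # data categories that require human sign-off


class PolicyStore:
    """In-memory, append-only store: new versions are added, old ones never change."""

    def __init__(self):
        self._versions = {}  # tenant_id -> list of TenantPolicy, oldest first

    def publish(self, tenant_id, **fields):
        history = self._versions.setdefault(tenant_id, [])
        policy = TenantPolicy(
            tenant_id=tenant_id,
            version=len(history) + 1,
            effective_at=datetime.now(timezone.utc),
            **fields,
        )
        history.append(policy)
        return policy

    def current(self, tenant_id):
        return self._versions[tenant_id][-1]

    def at_version(self, tenant_id, version):
        """Look up the exact policy an old audit entry was evaluated against."""
        return self._versions[tenant_id][version - 1]
```

Keeping every version addressable matters for audits: a log entry that records "policy version 3" is only useful if version 3 can still be retrieved unchanged.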

Layer 2: The Consent Evaluation Middleware

This is a sidecar or interceptor layer that sits between the agent orchestrator and every tool, API, or data source the agent can call. Before any tool invocation, the middleware evaluates three questions:

- Does the current tenant's active consent record authorize this tool call for this data category and processing purpose?

- Does the target tool's data residency region comply with the tenant's residency policy?

- Is this action within the agent's delegated authority scope (i.e., did the user or tenant admin explicitly authorize this class of autonomous action)?

If any check fails, the tool call is blocked, a structured refusal is returned to the agent, and a denial event is written to the immutable audit log. The agent is expected to handle refusals gracefully, either by attempting an alternative compliant tool or by escalating to a human operator.
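The three checks and the refusal path can be sketched as follows. This is an illustrative outline under simplifying assumptions: consent is modeled as a set of `(tool, data_category, purpose)` tuples, the audit log is a plain list standing in for an immutable log, and all names (`ToolCall`, `TenantConsent`, `evaluate`, `invoke`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    tool: str
    data_category: str
    purpose: str
    target_region: str
    action_class: str


@dataclass(frozen=True)
class TenantConsent:
    consented: frozenset          # (tool, data_category, purpose) tuples
    residency_regions: frozenset
    delegated_actions: frozenset  # action classes the tenant has authorized


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def evaluate(call, consent):
    """Run the three checks in order and report the first failure."""
    if (call.tool, call.data_category, call.purpose) not in consent.consented:
        return Decision(False, "consent_missing")
    if call.target_region not in consent.residency_regions:
        return Decision(False, "residency_violation")
    if call.action_class not in consent.delegated_actions:
        return Decision(False, "outside_delegated_authority")
    return Decision(True, "ok")


def invoke(call, consent, tool_fn, audit_log):
    """Gate one tool call; tool_fn is a zero-argument callable doing the real work."""
    decision = evaluate(call, consent)
    audit_log.append(
        {"tool": call.tool, "allowed": decision.allowed, "reason": decision.reason}
    )
    if not decision.allowed:
        # Structured refusal the agent can react to (retry elsewhere or escalate).
        return {"status": "refused", "reason": decision.reason}
    return {"status": "ok", "result": tool_fn()}
```

Returning a structured refusal instead of raising lets the orchestrator feed the reason back to the agent, which can then pick a compliant alternative or hand off to a human.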

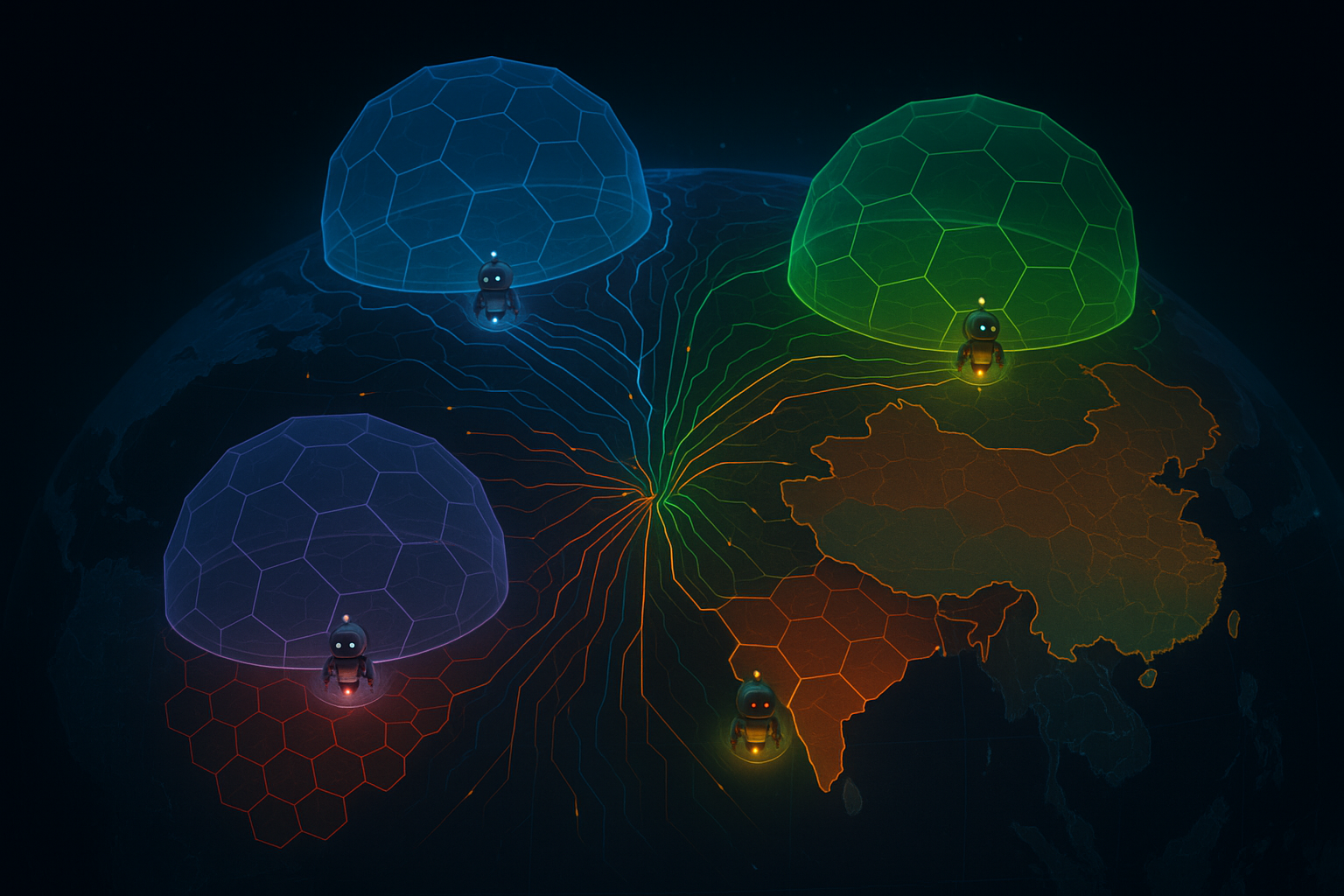

Layer 3: Regional Execution Envelopes

Rather than running all agent workloads in a single global compute environment, compliant architectures in 2026 use regional execution envelopes: isolated compute contexts (typically Kubernetes namespaces or separate clusters) deployed within specific geographic regions, with strict egress controls that prevent data from leaving the envelope boundary without an explicit, policy-authorized transfer event.

When an agent workflow is initiated for a tenant with EU residency requirements, the orchestrator selects the EU execution envelope. LLM inference is routed to a regional endpoint. Tool calls that would require data to leave the EU envelope are either blocked or routed through an approved transfer mechanism, with the transfer event logged.
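One way to picture the egress decision at the envelope boundary is the sketch below. It models only the policy decision, not the network controls that would enforce it; `Envelope`, `check_egress`, `route_call`, and the mechanism label `"scc"` are hypothetical names used for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    region: str
    approved_transfers: frozenset  # (destination_region, mechanism) pairs


def check_egress(envelope, destination_region, mechanism=None):
    """Classify a call as 'local', 'transfer', or 'blocked'."""
    if destination_region == envelope.region:
        return "local"
    if mechanism is not None and (destination_region, mechanism) in envelope.approved_transfers:
        return "transfer"
    return "blocked"


def route_call(envelope, destination_region, mechanism, transfer_log):
    """Return True if the call may proceed; log every approved transfer."""
    outcome = check_egress(envelope, destination_region, mechanism)
    if outcome == "transfer":
        transfer_log.append(
            {"from": envelope.region, "to": destination_region, "mechanism": mechanism}
        )
    return outcome != "blocked"
```

In a real deployment this decision would be backed by network-level egress rules, so that a bug in application code cannot bypass it.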

Layer 4: The Immutable Audit Trail

Every consent evaluation, every tool invocation, every data transfer event, and every HITL escalation is written to an append-only audit log. In production-grade implementations, these events are cryptographically chained (similar to a blockchain-style hash chain, but without the distributed consensus overhead) so that tampering is detectable. Each log entry includes: tenant ID, agent session ID, action type, consent artifact ID evaluated, residency region of the target, timestamp, and the policy decision (allow or deny).

This audit trail is what your legal team actually needs when a regulator comes knocking. It is not a Kibana dashboard. It is a tamper-evident record of policy enforcement that can be exported as a structured report.
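The hash chaining described for the audit trail can be sketched with the standard library alone. This is a minimal illustration assuming SHA-256 and canonical JSON serialization; `append_entry` and `verify_chain` are hypothetical helper names, and the in-memory list stands in for append-only storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, entry):
    # Canonical JSON so the same entry always hashes to the same value.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, entry):
    """Append an audit entry whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"entry": entry, "prev_hash": prev_hash, "hash": _digest(prev_hash, entry)}
    log.append(record)
    return record


def verify_chain(log):
    """Recompute every hash; any edited, removed, or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["entry"]):
            return False
        prev_hash = record["hash"]
    return True
```

A chain like this only detects tampering if an attacker cannot rewrite the whole log, so the latest hash still needs to be anchored somewhere the writer cannot modify, for example by signing it or by storing it in write-once storage.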

Layer 5: Human-in-the-Loop Escalation Gates

Legally defensible agentic architectures do not assume that agents should always proceed autonomously. The tenant policy store defines thresholds at which an agent must pause and request human authorization before proceeding. Common triggers include: accessing data categories marked as sensitive (health, financial, biometric), taking irreversible external actions (sending emails, submitting forms, executing financial transactions), and operating in jurisdictions where the tenant's legal team has flagged elevated regulatory risk.

HITL gates are not a UX afterthought. They are a compliance control, and they need to be logged with the same rigor as automated consent evaluations.
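A HITL gate driven by the three trigger types above might look like the following sketch. The `ask_human` callback stands in for whatever approval channel a team actually uses, and `HitlPolicy`, `escalation_reasons`, and `hitl_gate` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HitlPolicy:
    sensitive_categories: frozenset
    irreversible_actions: frozenset
    elevated_risk_jurisdictions: frozenset


def escalation_reasons(policy, data_category, action, jurisdiction):
    """Return every trigger that applies; an empty list means no escalation."""
    reasons = []
    if data_category in policy.sensitive_categories:
        reasons.append("sensitive_data")
    if action in policy.irreversible_actions:
        reasons.append("irreversible_action")
    if jurisdiction in policy.elevated_risk_jurisdictions:
        reasons.append("elevated_risk_jurisdiction")
    return reasons


def hitl_gate(policy, data_category, action, jurisdiction, ask_human, audit_log):
    """Pause for human approval when a trigger fires; log every outcome."""
    reasons = escalation_reasons(policy, data_category, action, jurisdiction)
    if not reasons:
        outcome = "auto_approved"
    elif ask_human(reasons):
        outcome = "human_approved"
    else:
        outcome = "human_rejected"
    audit_log.append({"action": action, "reasons": reasons, "outcome": outcome})
    return outcome != "human_rejected"
```

Note that the automatic approvals are logged too, so the audit trail shows that the gate was consulted even when no human was involved.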

Q5: What are the most common architectural mistakes teams are making right now?

Based on patterns visible across the industry in early 2026, here are the failure modes that keep appearing:

- Bolting compliance onto an existing agent framework as an afterthought. Teams that built their agent orchestration layer without residency and consent in mind are now trying to retrofit it. This almost never works cleanly. The enforcement logic ends up scattered across application code, it is inconsistently applied, and it cannot produce the structured audit artifacts that regulators require.

- Treating consent as a one-time event. Consent records expire, get revoked, get narrowed in scope. An architecture that evaluates consent once at session start and then assumes it remains valid for the duration of a long-running agent workflow is legally fragile. Consent must be re-evaluated at each material action, especially for workflows that span hours or days.

- Ignoring the LLM inference transfer problem. Many teams have correctly addressed data residency for their databases and APIs but completely overlooked the fact that sending a prompt to an LLM is itself a data transfer. This is one of the most common gaps that legal reviews are catching in 2026.

- Building residency enforcement at the storage layer only. Storing data in the right region is necessary but not sufficient. Data can leave its residency region through compute (running a job that reads the data in the wrong region), through tool calls (passing data as a parameter to a non-compliant tool), or through the LLM context window (including the data in a prompt sent to an out-of-region inference endpoint). All three vectors need to be controlled.

- Underinvesting in the tenant policy management UX. The most technically sound enforcement pipeline is useless if tenant admins cannot correctly configure their residency and consent policies. Misconfigured policies are a common cause of compliance incidents in multi-tenant agentic platforms. Invest in clear, well-documented policy management interfaces.
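The "consent as a one-time event" mistake is easy to see in code. The sketch below re-checks a consent record before every step of a long-running workflow instead of once at session start; `ConsentRecord`, `is_valid`, and `run_workflow` are hypothetical names, and timestamps are passed in explicitly to keep the example deterministic.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ConsentRecord:
    artifact_id: str
    scopes: frozenset
    expires_at: datetime
    revoked_at: Optional[datetime] = None


def is_valid(record, scope, now):
    """Consent must be unrevoked, unexpired, and cover the requested scope."""
    if record.revoked_at is not None and record.revoked_at <= now:
        return False
    if now >= record.expires_at:
        return False
    return scope in record.scopes


def run_workflow(steps, record):
    """steps is a list of (scope, timestamp) pairs in execution order.

    Consent is evaluated at each step; the workflow halts at the first
    step that is no longer covered and returns the scopes that did run.
    """
    executed = []
    for scope, when in steps:
        if not is_valid(record, scope, when):
            break
        executed.append(scope)
    return executed
```

With a check only at session start, a revocation that arrives thirty minutes into a multi-hour workflow would go unnoticed; here the next step simply does not run.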

Q6: What tools and frameworks are teams actually using to build this in 2026?

The ecosystem is still maturing, but here is what is seeing real production adoption:

- Open Policy Agent (OPA): The dominant choice for policy-as-code enforcement. Teams are writing Rego policies that encode per-tenant consent and residency rules, then evaluating them as a sidecar alongside agent orchestrators.

- Regional LLM inference endpoints: All major LLM providers (including OpenAI, Anthropic, Google, and Mistral) now offer regional inference options. Compliant architectures use a routing layer that selects the correct regional endpoint based on tenant policy, rather than using a global default.

- Immutable audit logging with Apache Kafka + WORM storage: Kafka-based event streams feeding into write-once, read-many (WORM) object storage (AWS S3 Object Lock, immutable storage for Azure Blob Storage, GCS Object Retention Lock) are the most common pattern for tamper-evident audit trails.

- LangGraph and similar stateful agent frameworks: Teams are building consent and residency checks as first-class nodes in their agent graphs, so enforcement is part of the workflow definition rather than an external wrapper.

- Consent management platforms with API-first designs: Platforms like Didomi, OneTrust, and newer purpose-built AI consent management tools are being integrated via API into agent middleware layers to provide real-time consent record lookup.
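For teams using OPA as a sidecar, the agent middleware typically queries it over OPA's REST Data API, which accepts a `POST` to `/v1/data/<policy path>` with an `input` document and returns the decision under a `result` key. The sketch below assumes a sidecar reachable at a local address and a hypothetical policy path `agents/consent/allow`; the helper names are illustrative, and the Rego policy itself is not shown.

```python
import json
import urllib.request


def build_opa_request(base_url, policy_path, input_doc):
    """Build a POST request for OPA's Data API without sending it."""
    url = f"{base_url.rstrip('/')}/v1/data/{policy_path.strip('/')}"
    body = json.dumps({"input": input_doc}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_decision(response_body):
    """Allow only on an explicit boolean true.

    OPA omits "result" when the rule is undefined, so a missing key
    is treated as a denial.
    """
    return json.loads(response_body).get("result") is True


def is_allowed(base_url, policy_path, input_doc, timeout=2.0):
    """Query the sidecar and fail closed if it is unreachable or errors."""
    request = build_opa_request(base_url, policy_path, input_doc)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return parse_decision(response.read())
    except OSError:  # covers URLError, HTTPError, and timeouts
        return False
```

A call such as `is_allowed("http://localhost:8181", "agents/consent/allow", {"tenant_id": "t1", "tool": "crm_lookup"})` would then gate a single tool invocation. Failing closed is a deliberate choice: an unreachable policy engine should block agent actions, not permit them.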

Q7: How should engineering teams prioritize this work if they are starting from scratch?

If your team is starting from zero on this, here is a pragmatic sequencing:

- Audit your current agent tool surface area first. Before writing any enforcement code, map every tool, API, and data source your agents can currently call. For each one, identify: what data categories it touches, where it processes data, and whether it is covered by your existing DPAs. This audit will reveal your highest-risk gaps immediately.

- Build the tenant policy store before the enforcement layer. You cannot enforce what you have not defined. Get the policy data model right first, even if your initial enforcement is simple. A well-designed policy store is the foundation everything else builds on.

- Implement LLM inference routing early. This is the highest-impact, lowest-effort win. Routing LLM calls to regional endpoints based on tenant policy eliminates one of the most common compliance gaps with relatively minimal engineering effort.

- Add consent middleware to your most sensitive workflows first. You do not need to enforce consent on every agent tool call on day one. Start with the workflows that touch sensitive data categories or take irreversible external actions. These are your highest legal risk areas.

- Get your audit trail in place before your first enterprise customer audit. Enterprise customers with their own compliance obligations will ask for audit reports. Build the immutable logging infrastructure early, even if the data in it is initially sparse. It is much harder to reconstruct audit history retroactively.
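The first step in this sequence, the tool surface audit, can start as a simple inventory with a gap report. The sketch below is one possible shape for it; `ToolEntry`, `find_gaps`, and the issue labels are hypothetical, and the inventory data would come from your own tool registry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolEntry:
    name: str
    data_categories: frozenset
    processing_region: str
    covered_by_dpa: bool


def find_gaps(inventory, approved_regions, sensitive_categories):
    """Return (tool name, issues) pairs, most issues first.

    Tools with no issues are omitted; ties keep inventory order.
    """
    gaps = []
    for tool in inventory:
        issues = []
        if not tool.covered_by_dpa:
            issues.append("no_dpa")
        if tool.processing_region not in approved_regions:
            issues.append("out_of_region")
        if tool.data_categories & sensitive_categories:
            issues.append("sensitive_data")
        if issues:
            gaps.append((tool.name, issues))
    return sorted(gaps, key=lambda gap: len(gap[1]), reverse=True)
```

Even a spreadsheet-grade inventory like this gives the policy store and the consent middleware a concrete list of tools, regions, and data categories to model.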

Conclusion: This Is Not a Compliance Tax. It Is a Competitive Moat.

It is tempting to frame per-tenant consent enforcement and data residency pipelines as pure compliance overhead: expensive, unglamorous infrastructure that exists only to satisfy lawyers and regulators. That framing is a mistake.

In Q2 2026, enterprise buyers evaluating agentic AI platforms are asking harder technical questions than they were eighteen months ago. Procurement teams at large financial institutions, healthcare organizations, and public sector agencies are running detailed technical due diligence on AI vendor architectures. The teams that can produce a clear, credible answer to "show me how your system enforces my data residency requirements at the agent execution layer" are winning deals. The teams that cannot are being disqualified.

Beyond sales, there is a deeper point: the engineering discipline required to build legally defensible agentic architectures (immutable audit trails, per-tenant policy enforcement, regional execution isolation) is the same discipline that produces more reliable, more observable, and more maintainable systems in general. The compliance pressure is real and it is uncomfortable. But the teams that meet it head-on are building platforms that will be trusted at scale. That trust is the moat.

The scramble is real. The architecture is achievable. Start with the policy store, enforce at the execution layer, and log everything.